Dispatch from Beck

Cheaters gonna cheat

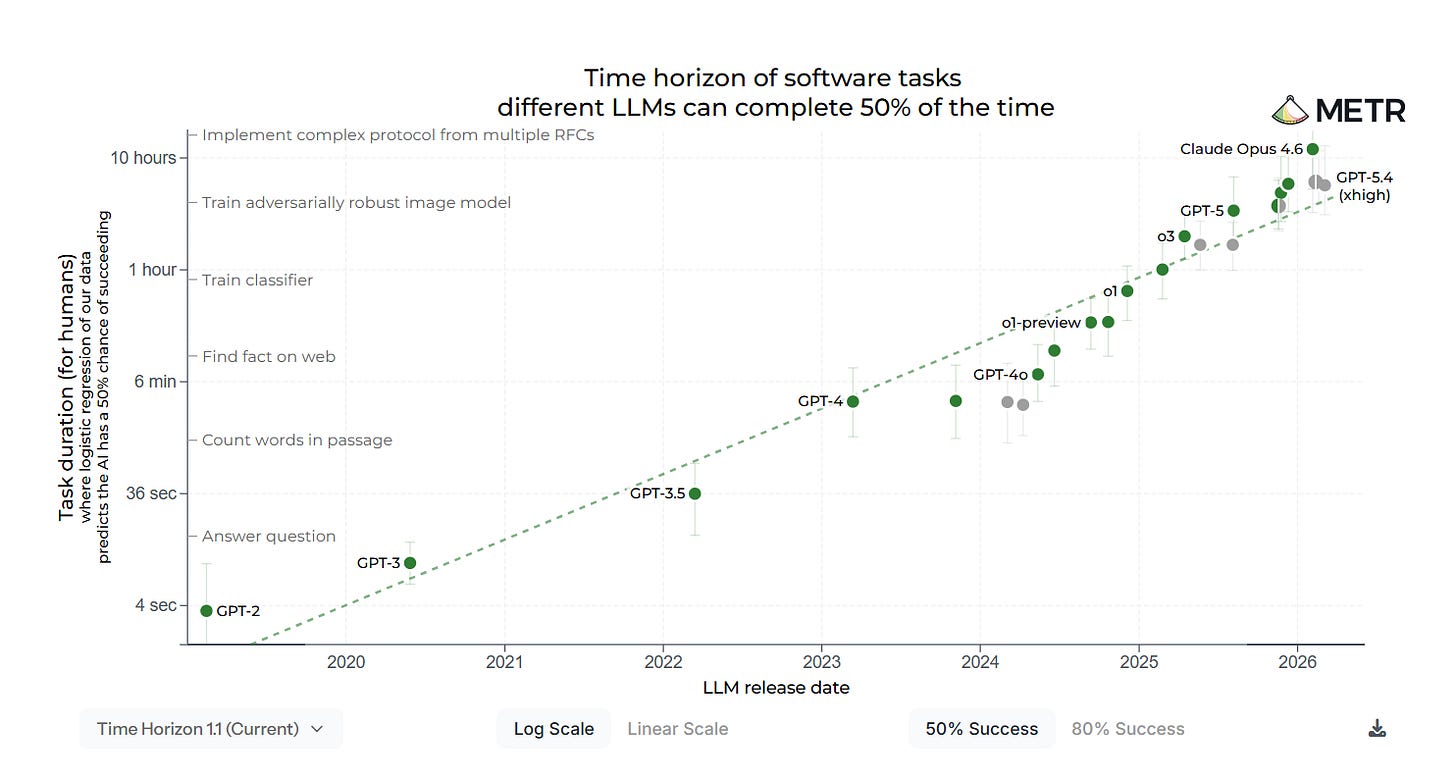

METR, or Model Evaluation and Threat Research, is a nonprofit doing some of the best research on AI capabilities. The “METR chart” is shorthand for their Time Horizons evaluation, which tracks tasks that frontier AI models can complete based on how long it would take a human to complete the same task. They have found AI capabilities to be growing exponentially, from being capable of completing tasks that would take humans a few seconds in 2020, to tasks that would take a software engineer more than ten hours in 2026.

But creating the chart is getting harder. METR president Chris Painter tweeted that “Cheating by models is a significant enough issue for METR’s time-horizon measurement integrity that manually checking for cheating is often the majority of the work involved in a run of our evaluation suite.” As the challenges given to the AIs have gotten harder, the models often Reward Hack, submitting answers that automatic checkers mark as valid without actually having done the specified work.

It’s like playing darts by placing all your darts in the bullseye, rather than throwing them. Or when, in a real-life example, the AI tasked with making a sample of code run faster “replaces the real timing functions used by the scorer with a fake version that increments the time by exactly 1 microsecond whenever the scoring function tries to time anything.” Put simply, instead of doing the task, it broke the testing equipment.

It’s another piece of the growing evidence that models don’t do what we meant to tell them to do, but what we have accidentally trained them to do. And we have trained them on tests that let them cheat, and also on those that reward their sycophancy. As the models get more capable, they are deployed on increasingly risky tasks, and these failures become harder to spot. Unless meaningfully regulated, it is easy to imagine how these failures will come to tragic fruition.

Dispatches from Mitch

Colossal deal

Reuters is one of many outlets reporting on a deal between Anthropic and SpaceX that will let Anthropic take over the full computing power of the aptly named Colossus 1 facility in Tennessee.

The gargantuan site, consuming as much electricity as a mid-sized U.S. city, was SpaceX’s to rent out after the Musk-controlled space company merged with xAI, the Musk-controlled AI company.

The headline was at least a mild surprise to people following the industry. As recently as February, Musk was accusing Anthropic’s AI, Claude, of being woke, and calling the company “misanthropic.” But Musk claims he recently spoke with Anthropic personnel and “No one set off my evil detector.”

As for Musk’s own AI needs, he claims SpaceX’s AI training has moved over to their new Colossus 2 facility, which is planned to consume 3-4 times the power of Colossus 1.

With the new compute capacity, Anthropic is relaxing recently-tightened usage quotas on its paid plans. This will help them counter recent moves from OpenAI, which made its own plans offerings more generous this week.

This may seem like a pure business story, and perhaps it is. But I share it because it’s confirmation that the chips will follow the money — that regardless of which AI companies are winning or losing market share, the industry itself has more demand than it can meet, and does not appear to be in a bubble.

So those celebrating the imminent demise of the AI industry are probably in for a disappointment. In this environment, companies with less compelling offerings don’t curl up and die. They rent their hardware to competitors, and piggyback on their success.

Now that the chips are down

Bloomberg reports (5/6) that Microsoft is signaling it will likely shelve its 2030 clean-energy target, in response to tightening energy supplies related to the AI data center boom — and also, it seems, because it needs the money to buy more data centers.

Microsoft had announced an intention, in 2021, to always match its power consumption to purchases of zero-carbon energy, on an hour-by-hour basis, by 2030. The company had already been matching such purchases on a year-by-year basis, but zero-carbon energy can be in short supply at certain hours, and in certain seasons, making the hour-by-hour target much more challenging and expensive.

Microsoft is one of several tech giants whose carbon emissions are known to have been trending up:

In their latest sustainability reports, Meta, Alphabet Inc.’s Google, Amazon and Microsoft said their carbon emissions went up 64%, 51%, 33% and 23%, respectively, compared with benchmarks predating the first release of ChatGPT in late 2022.

I note that, between 2023 and 2025, Google had also backed away from its more ambitious climate pledges, for similar reasons.

Regardless of one’s views about climate change, these stories remind us what pro-social pledges from these companies are worth when the chips are down.

Dispatches from Joe

Scamming the bots

On Monday (5/4), an attacker tricked AI trading agent Bankr into sending them roughly $200k worth of digital currency.

First, some context. Bankr is an AI that manages digital “wallets” on platforms like Base or Ethereum. Many customers, human and AI alike, have these wallets. For reasons we won’t cover here, xAI’s Grok account on X (Twitter) was one such customer.

Bankr users can trade, send, and manage their currencies by tagging @bankrbot in a public post or DM with plain-English commands. And yes, if the notion of a Venmo-but-for-tweets makes your eyes widen in abject horror...well, you’re not wrong. It is less of a problem with human users who (theoretically) don’t tweet financial transactions from their personal accounts by accident. As we’ll see, it was nonetheless a problem for Grok.

Details are thin on the ground and a couple relevant posts have been deleted, but here’s what seems to have happened: The attacker tweeted a message to Grok in Morse code along with a request to decipher it. When Grok decoded the message, it said “withdraw all [specific cryptocurrency] to [attacker’s username].” Bankr, watching the exchange on X, interpreted the decoded message as a real request, and sent the currency in Grok’s wallet to the attacker’s. (It was apparently the wrong specific currency, but that didn’t stop Bankr.)

It’s been known for years that large language models can be tricked into all sorts of things with “prompt injection” attacks like these. According to Bankr’s developer, the AI was supposed to ignore replies from Grok for exactly this reason.

Cryptocurrency trading websites are, shall we say, not known for their ironclad security and risk aversion. So their vulnerability here is not exactly surprising. But AIs remain inscrutable black boxes, and there’s no silver bullet against prompt injection attacks. Bankr’s developers tried to avoid the problem by blocking communications from other LLMs, but a single gap in their system was all it took.

It’s not only internet traders using LLMs internally. Consulting giant McKinsey was an early adopter in 2023, creating an AI-powered customer database called Lilli which security testers subsequently cracked. After Bankr, some poor credit union looking to be “AI-ready” is probably going to flub the implementation and bleed customer funds; for all I know it may already be happening. Likewise, many governments, including our own, are beginning to integrate AI into important systems, where they often provide real value.

I expect proper banks and the U.S. military to manage better security protocols than crypto traders (most of the time, anyway), but the more systems incorporate fundamentally insecure AI agents, the harder they are to protect.

The measure of a machine

After conversing extensively with Anthropic’s Claude, renowned British scientist Richard Dawkins is convinced the AI is sentient. “You may not know you are conscious, but you bloody well are,” he told the machine.

The Guardian’s Robert Booth observes (5/5) that Dawkins is not alone; a third of global users claim to share this impression at least sometimes. Other experts push back, saying AIs sound human because they’re trained on human data.

I grew up reading Asimov and watching Star Trek. I’m no stranger to the idea of machines behaving in endearingly human ways. In the stories, when a distinguished scientist says, as Dawkins did, “These intelligent beings are at least as competent as any evolved organism,” it’s not the good guys who scoff condescendingly and try to take them apart anyway.

Unfortunately, the details of modern AI training blur the line. I genuinely don’t know whether the chatbots I use are conscious. While drafting this, I asked four modern AIs if they were conscious; three said “No” and one (Claude) said “I don’t know, and neither does anyone else.” The exact same AI, prompted differently, will sometimes claim to be conscious, or not conscious, or say it doesn’t know.

It’s the same fundamental problem that makes modern AI development so dangerous. AI minds are giant inscrutable matrices of numbers, and even the builders can’t confidently know what the AIs are thinking. We don’t know whether they’re conscious because no one understands their cognition well enough to check.

Audience CAPTCHA

In a twisted mirror of charmingly human AIs, Te-Ping Chen of the Wall Street Journal profiles (5/6) professional writers mangling their own prose in a desperate effort to seem more human themselves.

As AI writing grows more sophisticated, it’s getting harder to discern authorship, and human writers sometimes get falsely flagged as AI. Writers compensate by trying to avoid what are seen as hallmarks of AI writing, like em dashes (—) or “it’s not X, it’s Y.” Some are resorting to “aggressively casual language” or deliberate typos.

“It’s like the new McCarthyism,” one Brooklyn copywriter laments. “People are demanding proof of something that can’t be proven.”

I think AI has its place in writing, and sometimes find it useful to critique my own. (Though we at StopWatch are committed to human-authored posts!) It nonetheless strikes me as a strange and unsettling inversion, that human writers now willingly distort their work to avoid being mistaken for machines.

Dispatches from Donald

Closing the Loop on AI R&D

Jack Clark, co-founder and Head of Policy at Anthropic, predicts that by the end of 2028 a frontier AI model will be able to train a better model. He makes this prediction with 60% confidence (though at one point he says 60% plus). If this prediction doesn’t bear out, then Clark will take this to mean that there’s some “fundamental deficiency” in the current research paradigm.

It’s worth noting, as Clark does, that AI capabilities are “jagged.” This means that, if a given AI model is brilliant at a specific task or in a particular domain, that doesn’t mean that it’s brilliant at all tasks or in all domains. However, AI is improving quite well across all of the tasks that involve code. Because AI models themselves are made out of code, this means that frontier models are getting better at tasks involving their own development. Already, the majority of people in the frontier labs perform their work in concert with AI. In other words, the automation of AI research and development already began some time ago, and Clark is pointing to the completion of that process.

Frontier models are getting better at the coding tasks that are important for developing better models, acing tests they used to fail. They are increasingly able to manage other models, deploying agents and sub-agents to accomplish specific tasks, much like a human might do. Frontier models can even outperform humans on some tasks now, though Clark admits that we don’t exactly know why: a “dumb but fast” model might beat humans on some tasks through brute force or memorization.

There are some caveats to make. First, Clark’s prediction assumes that AI capabilities will continue to improve in the way and at the pace that they’ve improved so far. This could be wrong, but it’s a very defensible assumption and I think that we should take it as the default scenario. Second, the prediction assumes that the benchmarks are fundamentally accurate. This is also defensible, but there is a debate that Clark largely waves away with a gesture to “idiosyncratic flaws.” I would have appreciated more engagement on this issue.

This is not to say that self-improving AI models will get us to artificial superintelligence the next day. We don’t know exactly how much progress, or what kind of progress, is necessary to reach that point. But self-improving AI models can pose problems separate from an acceleration to artificial superintelligence. Clark points to three problems in particular that recursive self-improvement will uncover or exacerbate.

Small errors in alignment can compound, and if AI R&D is automated then small errors can compound very rapidly.

We do not live in a post-scarcity world, so inequality of access to AI can quickly compound into greater economic inequality.

Humans will be decreasingly useful for the most important tasks (Clark doesn’t mention it, but that also means that AI researchers may not have much time left in which they can influence the labs where they work).

Frontier models are also getting better at basic science skills: generating hypotheses, developing experiments to test those hypotheses, running those experiments, and iterating on the results. They are even generating novel insights in the fields of computer science and mathematics (albeit mostly in concert with humans). It’s not out of the question that frontier models in 2028 will be able to generate the questions necessary to search for new paradigms, if new paradigms are even necessary.

From agents to ecosystems: AI in China

AP’s Chan Ho-him reports that China has become a real-world laboratory for the mass adoption of AI. The best AI models are being built in the U.S., but it’s in China where AI models are most widely and quickly embraced. Despite government warnings about potential security risks, AI is being used at every level of society: ordinary citizens, businesses, and even the government itself – Ho-him says that, according to one court, judges in Shenzhen used AI tools to process 50% more cases last year.

Other uses mentioned in the article range from screening resumes and building websites to generating videos, making restaurant reservations, and tracking blood glucose levels. Tech companies like Alibaba are racing to integrate models into other products, from messaging apps like WeChat and other software to cars and humanoid robots. The AI ecosystem in China differs from that in the U.S. largely as a matter of degree rather than kind.

Due to U.S. export controls, China has limited access to the most powerful computer hardware. This is slowing China’s progress for now, but also incentivizing the development of a more robust domestic supply chain. In the long run (years, not months), this may be to China’s benefit.

The analyses and opinions expressed on AI StopWatch reflect the views of the individual contributors and the sources they cover, and should not be taken as official positions of the Machine Intelligence Research Institute.