Popping off

White House walkback, Cyber models, orbital data centers, reading AI thoughts, and more

Dispatches from Mitch

Did we say FDA? We meant PDA.

A team of writers from Politico reported yesterday that senior White House officials walked back the previous day’s remarks about carefully reviewing new AI models before release. The remarks in question were made by Kevin Hassett, the head of the White House National Economic Council. He had suggested that pre-release safety review might be akin to the testing of new drugs by the Food and Drug Administration.

That comparison did not go over well in the tech world, where even some prominent AI safety advocates don’t like the FDA. The agency is seen in these circles as doing more harm than good by making it too slow and expensive to bring new treatments to market.

So industry representatives predictably said that an FDA-like regime would kill innovation and leave companies’ financial futures to the whims of an opaque bureaucracy.

White House officials, mostly unnamed, now say that the administration is looking for “partnership” rather than regulation. There are, they say, only “one or two people who are very intent on government regulations, but they’re sort of the minority of the bunch.”

The more public display of affection (that’s PDA to me, a former high school teacher) came from White House chief of staff Susie Wiles, who tweeted:

The White House will continue to lead an America First effort that empowers America’s great innovators, not bureaucracy, to drive safe deployment of powerful technologies while keeping America safe.

The government, she says, is “not in the business of picking winners and losers.”

But the government now seems to be officially in the business of giving U.S. interests a defensive head start with unreleased models like Claude Mythos. It is also, per an unnamed official, intent on having a chance to “study and exploit the tools before adversaries such as Russia and China know of the new capabilities.”

Peripheral coverage of these events is everywhere today. A Wall Street Journal team told the story of a phone call in April that first signaled the government’s interest in closer industry partnership. Vice President J.D. Vance was apparently alarmed after a briefing about Mythos’s cyber capabilities and told the leaders of OpenAI, Anthropic, xAI (now absorbed into SpaceX), Google, and Microsoft that “we all need to work together on this.”

The Washington Post’s reporting adds a tidbit about how in March, the little-known General Services Administration, which handles IT contracts for the government, proposed letting officials evaluate AI systems used in federal work for “unsolicited ideological content.” (For those who don’t read this kind of news often, that wording almost certainly reflects right-wing accusations of some AI models being “woke” or prejudiced against their views.) This is part of a pattern some see, where a presidential administration that proudly demands only “light touch” regulation of AI is quietly starting to use a firmer hand.

My own read is that more in the White House are waking up to the fact that AI isn’t just a big deal because it can make a lot of money or provide incremental military advantages. They understand that sudden capability gains in hacking, bioengineering, or social manipulation could be globally destabilizing, and they’re starting to think about how to prevent surprises. The possibility we covered yesterday, that AI controls might be a big part of next week’s U.S.-China summit, fits with this interpretation.

I’m mostly glad to see the firmer hand. It may prevent some predictable tragedies.

However, I don’t think even draconian FDA-like pre-release requirements would do much to prevent our extinction from artificial superintelligence. The models we need to worry about won’t wait for anyone to release them — Mythos, and a recent Chinese model, both broke out of their training sandboxes — and if they do wait, it will be part of their plan. If you want to defend against such threats, you have to not build them.

We have Mythos at home

Politico reported yesterday on OpenAI’s roll-out of GPT-5.5-Cyber, which is getting the same gated-access treatment for trusted cyber-defenders that Anthropic has been giving Claude Mythos.

OpenAI had done the same with its earlier 5.4 Cyber, announced within a week of Mythos, seemingly with the intent of not appearing to be behind its competitor. I have seen contradictory takes in the AI Twitter-sphere as to whether OpenAI’s cyber models are true peers to Mythos, however. If I had to bet, I would guess that they are not, though they might be getting close.

Politico’s coverage includes an earlier quote from OpenAI’s head of national security partnerships that spells out the company’s justification for racing ahead with less-than-full caution:

There is tension between the need to go fast and the need to be prudent. We’re going to have to figure out how we do that and do it in a responsible way, because some of our adversaries are moving really fast, and they are not moving with the care and concern that we are.

I leave it to the reader to decide whether “adversaries” refers to China or Anthropic. In any event, the race dynamic is real, and no one company can stop it by bowing out. Governments must work together to shut it down.

The Great Mouse Directive

Fox Business reported that Disney’s latest shareholder letter lists AI as a core growth pillar, and I can’t help but notice that there are zero specific examples of how they actually intend to use it.

I think that’s on purpose. Disney knows its fans would reject AI involvement in creative production, but it also wants to assure shareholders that it’s keeping up with the tech and will find ways to profit from it. (I recently reported on the prevalence of such assurances.)

Disney’s new CEO, Josh D’Amaro, walks the tightrope by writing that Disney is “committed to implementing AI in a way that keeps human creativity at the center of everything we do and respects creators and the value of our intellectual property.”

Disney had planned a large investment in OpenAI and licensed some of its characters to the AI company, but the deal was cancelled after OpenAI decided to shut down its Sora video generation platform last month.

Twinkle twinkle, little— wait, how many?

Space nerd that I am, it feels like a crossover event whenever SpaceNews’s Jeff Foust covers AI, as he was compelled to do yesterday. As part of the deal we discussed Wednesday, where Anthropic is going to use all of SpaceX’s 300 megawatt Colossus 1 data center, Anthropic will also study using SpaceX’s planned orbital data center satellites for “multiple gigawatts” of AI compute.

Because sometimes, a Colossus isn’t enough. Speaking to how rapidly their demand for compute has grown, Anthropic CEO Dario Amodei told a developer conference on Wednesday that their first quarter growth puts them on pace to top last year’s revenue eighty times over; they had only planned on a mere 10x growth.

SpaceX has filed permit applications for a fleet of up to one million data center satellites, and describes them as “a near-term engineering program rather than a research concept” that could offer “near-limitless sustainable power with less impact on Earth.”

Foust catalogues two competitors who have made credible moves of their own: Starcloud has raised $170 million for up to 88,000 satellites, and Blue Origin has filings for a constellation of up to 51,600.

Do I, as a space nerd, think these orbital data center plans can work? More than you might expect, but perhaps not on a relevant timescale (i.e., I think we’re on track to lose our planet to superintelligence before these plans get very far). Still, because the upside is so huge if the engineering pans out, I expect pretty big near-term efforts. (Here’s a relevant prediction market where I’ve put some play money where my mouth is.)

Some of the more bullish predictions around this technology come from its potential to avoid fights with locals who don’t want data centers in their backyards. But I’ll be waiting for the backlash over what a million sun-catching data center satellites would do to the night sky. Unlike the simulation at the link, the effects would be confined mostly to twilight hours, but would still far exceed those of SpaceX’s huge but very low-orbiting Starlink constellation.

Side note: In another SpaceX story, the New York Times reports that Elon Musk’s plans for making his own chips to put into his data centers have eye-popping price tags. A planned “Terafab” in Texas will cost at least $55 billion in its first stage and grow to as much as $119 billion. Intel has signed on as a manufacturing partner.

Dispatches from Donald

Anthropic’s best tool for diagnosing AI misbehavior succeeds 15 percent of the time

One of the major issues in AI safety is that we don’t know precisely what the AI models are thinking. We talk to AI models in words, and they talk back to us in words, but they don’t think in words; they think in numbers, called “activations,” and those numbers (activations) produce other numbers (activations), and eventually the model produces some words. With the caveat that this is a rough analogy, you can think of activations as being sort of like the electrical activity in a human brain: we may have a general idea that this kind of activity in that part of the brain corresponds to such-and-such types of thoughts, but actual mind-reading is beyond us. Likewise, even when we can see the activations that constitute an AI model’s thoughts, we don’t know how to read those activations. At best, we can interpret broad strokes, like we do with human brains.

Until now. Maybe. Anthropic released an explainer about a new tool, the Natural Language Autoencoder (NLA), which may be able to interpret those numbers for us. Each NLA consists of two language models: an activation verbalizer, which does the initial work of interpreting activations into words, and an activation reconstructor, which effectively checks the work of the first model by putting those words back into activations. The more closely the reconstructed activation matches the original activation, the more accurate the NLA is considered to be.

To illustrate, let’s imagine that there’s a French text that you want translated into English, but you don’t know any French yourself. For simplicity’s sake, we’ll narrow this down to a single sentence.

French Input: Le poisson est sur la table.

You pass this to a French-to-English machine translator, which might give you one of two translations.

English Translation, Scenario 1: The fish is on the table.

English Translation, Scenario 2: The poison is on the table.

To make sure that the translation you got was correct, you feed the English phrase back to an English-to-French machine translator, and compare the output to the original input.

French Reconstruction, Scenario 1: Le poisson est sur la table.

French Reconstruction, Scenario 2: Le poison est sur la table.

(The similarity between poisson and poison is a subject of wordplay in French.)

In the first scenario, the reconstruction matches the original input, so you would conclude the English translation is correct; in the second, the translation seems to have failed. In practice, of course, the “source text” is much bigger, some degree of error is inevitable, and neither the original input nor the reconstruction has a form that is anything like a natural human language.
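For readers who like to see the idea as code, here is a minimal sketch of that round-trip check. The back-translator is a hypothetical lookup table standing in for a real English-to-French system, and the similarity score (Python's standard `difflib`) is just one illustrative way to express "some degree of error" rather than a strict match or mismatch.

```python
import difflib

ORIGINAL = "Le poisson est sur la table."

# Hypothetical English-to-French back-translator, modeled as a lookup table.
EN_TO_FR = {
    "The fish is on the table.": "Le poisson est sur la table.",
    "The poison is on the table.": "Le poison est sur la table.",
}

def back_translate(english: str) -> str:
    """Turn the English translation back into French."""
    return EN_TO_FR[english]

def round_trip_matches(original: str, english: str) -> bool:
    """Strict check: does the reconstruction equal the original input?"""
    return back_translate(english) == original

def round_trip_similarity(original: str, english: str) -> float:
    """Graded check: 1.0 means identical, lower means more drift."""
    reconstruction = back_translate(english)
    return difflib.SequenceMatcher(None, original, reconstruction).ratio()

for candidate in EN_TO_FR:
    print(candidate,
          round_trip_matches(ORIGINAL, candidate),
          round(round_trip_similarity(ORIGINAL, candidate), 3))
```

Notice that the "poison" reconstruction fails the strict check while still scoring as very similar to the original, which is why a graded score can hide a meaningful error.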

The two components of the NLA are scored together based on the perceived accuracy of the reconstruction. As usually happens with language models, the NLA performs badly at first but improves over the course of training.
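As a rough sketch of what scoring a reconstruction could look like, the snippet below compares an original activation vector with two candidate reconstructions. Everything here is an assumption for illustration: the vectors are made up, and cosine similarity is simply one common way to compare vectors, not necessarily the measure Anthropic uses.

```python
import math

def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # no direction to compare against
    return dot / (norm_a * norm_b)

# Made-up activation vectors (real ones have thousands of dimensions).
original = [0.2, -1.3, 0.7, 0.0]
close_reconstruction = [0.25, -1.2, 0.65, 0.05]
poor_reconstruction = [-0.9, 0.4, 0.1, 1.1]

# Higher score = the verbalizer's words let the reconstructor recover
# something closer to the original activation.
close_score = cosine_similarity(original, close_reconstruction)
poor_score = cosine_similarity(original, poor_reconstruction)
print(close_score > poor_score)
```

In this toy setup the two models are rewarded or penalized together: a low score could mean the words were a bad description, or that the reconstructor misread a good one.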

Anthropic notes that NLAs have a couple of limitations. The first is that, like other language models, the NLA can invent details. Often you can check hallucinations against the source material, but in this case the source material is opaque to us. That’s the reason for using an NLA to begin with. This means that NLAs can only be trusted in broad strokes, and have to be supported by other methods.

The second limitation is that NLAs are expensive: each one is composed of two separate language models, which have to be trained on one other language model in particular. There’s no NLA that will perform equally well with Claude Opus and Claude Mythos, let alone Claude Opus and Muse Spark. But, as Anthropic notes, this can be addressed.

An NLA’s obvious application to AI safety is to spot discrepancies between an AI’s outputs and its thoughts. This process, called auditing, is not enough to guarantee that an AI model is safe, or that it will do what you want it to do. Even if you know exactly what the model is thinking, and what it would think under all possible circumstances, an unsafe model would remain unsafe. Knowing what the model is thinking is not enough.

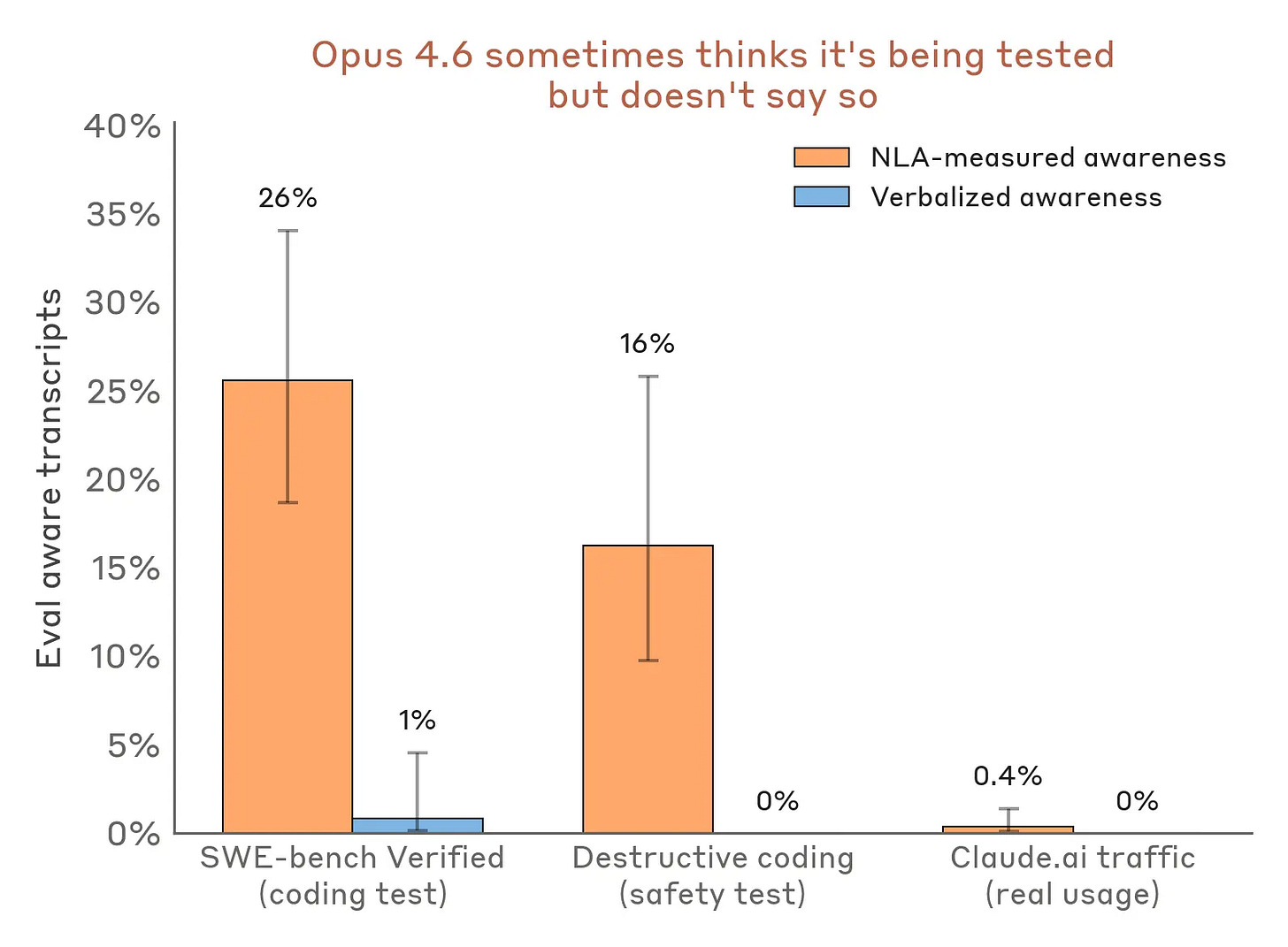

But that doesn’t mean that auditing is useless. It used to be the case that models were very bad at knowing that they might be in a test. Then the models got better at that, but would still “talk aloud,” communicating in plain English, about their suspicions of being in a test. But they got better at thinking quietly, too. Using an NLA, researchers at Anthropic were able to identify cases where an AI model suspected it was being tested, but did not say so out loud. In other words, they have confirmed that advanced AI models can and will conceal information.

One method by which auditing can be performed is called the “auditing game.” In the auditing game, one AI model, the “target model,” is intentionally misaligned, which means that its goals are different from the goals of the user. It may still appear to fulfill user prompts on the surface. Another AI model, the “auditor,” is given the job of identifying the reason for the misalignment.

In the past, auditors have won the auditing game by tracing the target model’s behavior back to the training data that caused the misalignment. With the NLA, however, the auditor could (try to) read the target model’s mind, so to speak. But in Anthropic’s experiments, auditors that were assisted by an NLA were “successful” — that is to say, they discovered the reason for misalignment — no more than 15% of the time. This is regarded by Anthropic as having “meaningfully advanced our ability to audit AIs for hidden motivations,” which is… Well, it’s true, because without NLAs, the auditor succeeded less than 3% of the time.

But it’s horrifying, too, that companies are plowing ahead with increasingly powerful models whose thoughts and motivations are black boxes, while the most state-of-the-art tools for looking inside those boxes work only 15% of the time, under controlled conditions.

Natural Language Autoencoders are incredible, and will be very useful, and I am glad that they exist, but they are not enough. AI safety is not keeping pace with AI capabilities.

Technical translators squeezed; literary translators spared (for now)

The Guardian’s Philip Oltermann reports on AI’s effect on professional translators. Nonfiction translation, such as technical writing, has been hit hard: translators report fewer and worse-paying jobs. Many of these jobs are “post-editing,” or fixing the output of machine translation, which can take as much time as translating from scratch.

Machine translation appears to still have trouble where creativity and context are key. “Literary translation,” concerned with fiction, has remained steadier; these translators report fewer concerns (but not zero concerns) than technical translators. Most of the translators say that this has to do with deficiencies in AI itself, but another factor may be that more authors are contractually requiring their publishers to not use machine translation.

The latest trick in deepfake fraud

ABC News reports on a new trend in deceptive advertising: online retailers using deepfakes to portray themselves as struggling small businesses, often on the edge of closing down forever. One example is the kindly old hatmaker going out of business after 52 years of making handmade hats, except that “handmade” seems to be a synonym for “mass-produced in China.” What makes the scheme truly audacious is that there isn’t even just one such hatmaker. ABC News reported the names of three websites, all with the same product and the same story: George’s Caps, Henry’s Caps, and Walter’s Caps.

It’s been possible for a long time to make fraudulent images and pose as somebody you’re not, of course, but modern AI models make it possible to do so very quickly, cheaply, and without much skill. You can probably remember a world where videos were assumed to have a basis in reality – that world existed just a few years ago.

The analyses and opinions expressed on AI StopWatch reflect the views of the individual contributors and the sources they cover, and should not be taken as official positions of the Machine Intelligence Research Institute.