Dispatches from Mitch

The Squid Game behind AI training

WIRED has published a long and colorful account of gamified exploitation in the data labeling industry that feeds AI training.

The tale comes courtesy of Ruth Fowler, a Hollywood writer and showrunner, who claims that data annotation has become a ubiquitous side-gig for writers in her industry. She spent eight months and twenty contracts with firms like Mercor and Task-ify that use gig-economy tactics to foment competitive all-night frenzies among would-be “taskers.” The work sometimes pays well, when you can find it and claim it before someone else does.

Fowler’s people are mostly older and educated, many with advanced degrees. It is their expertise that has at times let them command rates of up to $150 an hour. But this entails working under fresh graduates half their age and doing tasks like grading chatbot tone, annotating the millisecond a balloon pops in a video, testing whether the AI can be tricked into generating anime sex scenes or bomb recipes, and scrubbing a scuba diver out of a stock photo.

One worker posted in a work channel, “It feels like we are all in a fishbowl waiting for our human masters to drop some food in a big aquarium.” A compatriot said it was all “a bit Hunger Games,” but it sounds like Squid Game might be the better comparison. Like all good dystopian game shows, this industry comes off looking pretty rigged.

Prizes and promotions may not be what they seem. Online workplaces vanish overnight. Everyone seems to always get an extended interview for new work, but it’s with a chatbot that might be using their conversation as unpaid training data.

Wages have collapsed for Fowler’s class of experts. Gone are the days of $150 an hour; now they’re lucky to get $50. Less expert work has fallen to $16 an hour.

Lawsuits allege Mercor is misclassifying its workers. They are expected to be constantly on call and work a certain number of hours every week, but the company insists that they are contractors, not employees.

Team leaders at these companies seem to be drilled in the distinction:

In a midnight Slack message, a team leader snapped that I should not rely on this work. I should not expect anything from it. These are not jobs, these are “tasks,” and we are “taskers.”

The prompt before the prompt

The Washington Post’s Kevin Schaul wrote an explainer about AI system prompts, the hidden instructions given to chatbots ahead of every user prompt.

System prompts simultaneously matter a lot to the user experience and very little to the overall safety profile of a chatbot (as I’ve covered recently); they are unreliable, and they are easily circumvented by bad actors.

We learn in this piece that system prompts for three popular chatbots run from 2,300 to 27,000 words, per one hobbyist researcher who publishes the prompts he manages to extract. That’s right: Every time you start a new chat session, the chatbot reads up to a novella’s worth of instructions before seeing a single word you type.
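To make the proportions concrete, here is a minimal sketch of how a hidden system prompt sits in front of a user's first message. The role/content message layout is a common convention among chat interfaces, not something the Post piece attributes to any particular vendor, and `build_request` and `word_count` are hypothetical helper names used only for illustration.

```python
def build_request(system_prompt, history, user_message):
    """Assemble the message list a chat model might receive for one turn."""
    # The system prompt always comes first, before anything the user typed.
    messages = [{"role": "system", "content": system_prompt}]
    messages.extend(history)
    messages.append({"role": "user", "content": user_message})
    return messages


def word_count(messages):
    """Count whitespace-separated words across all messages."""
    return sum(len(m["content"].split()) for m in messages)


# Stand-in text matching the longest prompt length cited above (27,000 words).
system_prompt = "word " * 27_000
request = build_request(system_prompt, [], "What's the weather like?")

hidden_share = 27_000 / word_count(request)
print(f"{hidden_share:.2%} of the words in this request are hidden instructions")
```

In a fresh chat with a short question, nearly everything the model reads is text the user never sees.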

More than 2,000 words of Claude’s system prompt are devoted to copyright — “Copyright compliance is NON-NEGOTIABLE” — with rules capping quotes at fifteen words and song lyrics at “not even one line.” There are fallback instructions for when the main instructions fail.

OpenAI’s Codex tool is famously forbidden to engage in unprompted discussion of “goblins, gremlins, raccoons, trolls, ogres, pigeons, or other animals or creatures...”

Last summer, xAI’s Grok prompt was changed repeatedly in an effort to quash antisemitic replies and stop it from calling itself MechaHitler.

When ChatGPT is asked about ads shown to users, it is instructed to “avoid categorical denials [...] or definitive claims.” I read this as ChatGPT being told to take a stance of “I just work here.”

There’s a theme in this piece that simply knowing system prompts exist might help you as a user. Behavior that can seem defensive and random might make a little more sense, and you might be able to route around some of the sillier stubbornness once you intuit the instructions that might be causing it.

A payday for OpenAI employees

The famous quote attributed to Upton Sinclair is that “It is difficult to get a man to understand something, when his salary depends on his not understanding it.”

So if we want OpenAI employees to understand the extinction threat from superhuman AI, how big a difficulty are we talking about?

The Wall Street Journal’s Berber Jin looked into the compensation of current and former OpenAI employees and shared his findings yesterday.

At least six hundred were each eligible to sell up to $30 million in OpenAI stock in October, and roughly seventy-five of them made the full exchange. In total, $6.6 billion changed hands, with private investors on the other end of the transaction. Such sales are infrequent, but until the company’s stock goes public — planned for later this year — they are the only opportunity for employees to cash out; many had to wait two years to become eligible.

The payouts are believed to be driving up rental prices in San Francisco, with rates trending 12–14% higher than last year.

On the more positive side, some employees chose to donate many of their shares to charitable investment accounts, giving themselves a tax break while supporting philanthropic causes.

Top OpenAI executives, naturally, control far more shares. In the current trial brought by Elon Musk, President Greg Brockman testified he holds about $30 billion in equity.

CEO Sam Altman has said he doesn’t own any shares — a legacy of the company’s non-profit roots — but investors think OpenAI will give him equity once the trial is over, assuming victory.

The tyranny of self-service

If you’re old enough, or live in New Jersey, you might remember a time when you weren’t allowed to pump your own gas. Does the memory make you feel liberated? Or cheated?

Today’s New York Times includes a guest essay by Oxford economist Carl Benedikt Frey. He points to the phenomenon where many people who once hired a laundress ended up using a washing machine — which is much cheaper, but more work. This is the self-service trend of grocery stores and banks, and now it’s happening with AI.

Already, roughly one in four Americans use AI to help file their taxes. More than 40 million people worldwide use ChatGPT daily for consultations about medical symptoms, bills, and insurance claims.

The ability to do everything ourselves may be satisfying, but it can gradually overload us with busywork without our noticing. Tasks that we used to delegate will still be done. They will simply move out of the work force and into the household as new forms of invisible, unpaid labor.

Ultimately, we could end up with more money and more cool stuff, but less time and attention to enjoy them.

Being an economist, Frey says we often fall victim to opportunity cost neglect: We notice the accountant’s fee we didn’t pay, but we rarely notice the evening we spent doing the accounting ourselves with a chatbot. We can, and should, put a price on such things.
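As a rough illustration of putting a price on the evening, the arithmetic can be sketched as below. The fee, the hours, and the value placed on an hour are all hypothetical numbers chosen for the example, not figures from Frey's essay, and `diy_net_saving` is an invented helper name.

```python
def diy_net_saving(fee_avoided, hours_spent, value_of_hour):
    """Fee saved by doing it yourself, minus the value of the time it took."""
    return fee_avoided - hours_spent * value_of_hour


# Hypothetical: skipping a $300 accountant by spending 4 hours with a chatbot.
print(diy_net_saving(fee_avoided=300, hours_spent=4, value_of_hour=40))  # 140
print(diy_net_saving(fee_avoided=300, hours_spent=4, value_of_hour=90))  # -60
```

The same evening is a bargain or a loss depending entirely on what an hour of your time is worth to you, which is the number that usually goes unexamined.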

Dispatches from Joe

Smoking firewalls

“Do not share this code with anyone,” says the text message from your bank. You type the number into your browser, perhaps feeling mildly irritated at the hassle. After a moment, the digital gates swing wide, and you can finally see your checking account and pay your bills.

This common process is an example of “two-factor authentication,” a security measure that has been growing more popular as hackers get more sophisticated. It is substantially more secure than passwords alone, but that hasn’t stopped criminals from looking for ways around it. Thanks to AI, they’ve finally found one.
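For readers curious what sits behind the text message, here is a minimal sketch of a server issuing and checking a one-time code. It is an assumption about how such a flow could work, not a description of any real bank's system; the five-minute lifetime and the names `issue_code` and `verify_code` are hypothetical.

```python
import hmac
import secrets
import time

CODE_TTL_SECONDS = 300  # assumption: codes expire after five minutes


def issue_code(now=None):
    """Return (code, expires_at) for a fresh six-digit one-time code."""
    now = time.time() if now is None else now
    # secrets draws from a cryptographically secure random source.
    code = f"{secrets.randbelow(10**6):06d}"
    return code, now + CODE_TTL_SECONDS


def verify_code(submitted, issued, expires_at, now=None):
    """Accept the code only if it matches and has not expired."""
    now = time.time() if now is None else now
    if now > expires_at:
        return False
    # Constant-time comparison avoids leaking how many digits matched.
    return hmac.compare_digest(submitted.encode(), issued.encode())
```

The second factor here is possession of the phone that receives the code, which is why the message tells you not to share it.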

Today Dustin Volz of the New York Times and several other outlets covered a report by the Google Threat Intelligence Group (GTIG). Google caught a crime ring trying to exploit a previously unknown vulnerability in a widely used piece of security software. The code the hackers used to discover and exploit the flaw showed signs of being AI-written, including unusually verbose comments no human programmer would bother to write. GTIG conspicuously avoided naming the specific criminal group, the software, or the details of its AI detection.

You might be tempted to round this off to another ho-hum development in the usual back-and-forth of cybersecurity. Exploits are found, exploits are patched, life goes on. The attack didn’t even succeed this time! What’s the big deal?

But this is not business as usual. Digital flaws are indeed everywhere, but until very recently, it was hard for criminals to find and exploit those flaws at scale. (Relatively hard, anyway; cybercrime still costs the world many billions every year.)

It’s 2026 now, and times are changing. Anthropic’s Claude Mythos Preview found thousands of these exploits in a matter of weeks, and the vast majority of these flaws were not patched recently. What’s more, GTIG observes that cybercriminals are engaged in systematic campaigns to gain access to state-of-the-art AI models to fuel more attacks. As we’ve noted before, security at AI labs is not the best.

John Hultquist, chief analyst of GTIG, warns that the criminals we actually catch are only the tip of the iceberg. “It’s a taste of what’s to come.”

Household users don’t have many defenses against attacks like this, but there are still some things you can do to minimize exposure. Update your software regularly (ideally automatically), use a password manager and a passphrase instead of a confusing Pa$$w0Rd, and use two-factor authentication or even a physical key.

And for the love of all things digital, don’t click links in unfamiliar messages.
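The passphrase advice above rests on simple arithmetic, sketched here under stated assumptions: both secrets are chosen uniformly at random, the password draws from the 94 printable ASCII characters (excluding space), and the passphrase draws from a 7,776-word list of the kind used by dice-based schemes. `entropy_bits` is a hypothetical helper name.

```python
import math


def entropy_bits(choices, length):
    """Bits of entropy for `length` independent picks from `choices` options."""
    return length * math.log2(choices)


password_bits = entropy_bits(94, 8)      # 8 random printable characters
passphrase_bits = entropy_bits(7776, 5)  # 5 random words from a 7,776-word list

print(f"8-character password: {password_bits:.1f} bits")
print(f"5-word passphrase:    {passphrase_bits:.1f} bits")
```

A password a person invents, such as a dictionary word with symbol substitutions, is far less random than the first figure assumes, so the real gap is likely wider.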

Democratizing digital weaponry

OpenAI plans to offer its high-powered cyber model in the European Union, writes CNBC’s Kai Nicol-Schwartz. Businesses and public institutions alike have been promised access.

OpenAI touts the move as “democratizing access” to “defensive tools.” I doubt this framing; bad actors are already laundering access to premium models through various businesses, and the cyber capabilities of modern AI imply both offense and defense. If organized cybercrime doesn’t yet have access to “GPT-5.5-Cyber,” they likely will soon. On the other hand, I’m not sure OpenAI could have realistically stopped such a development, from the moment it released the model to anyone who passes (or spoofs) its “trusted access” filters.

Given that bad actors may well be using these capabilities already, maybe giving more businesses and governments access will indeed help them defend.

In any case, it means more revenue for OpenAI.

Meanwhile, OpenAI’s competitor Anthropic has yet to expand access to its highly capable Mythos model. In fact, a previous attempt to expand was blocked by the White House, on the grounds that Anthropic lacks the compute capacity to serve all its potential customers, and the administration does not want to diminish its own use.

I do think Anthropic deserves some credit for trying to screen its customers more strictly. But I find myself wondering if we’re seeing the start of a new arms race, the main beneficiaries of which will be AI companies selling access to dangerously powerful hackers.

AI blackmail, one year later

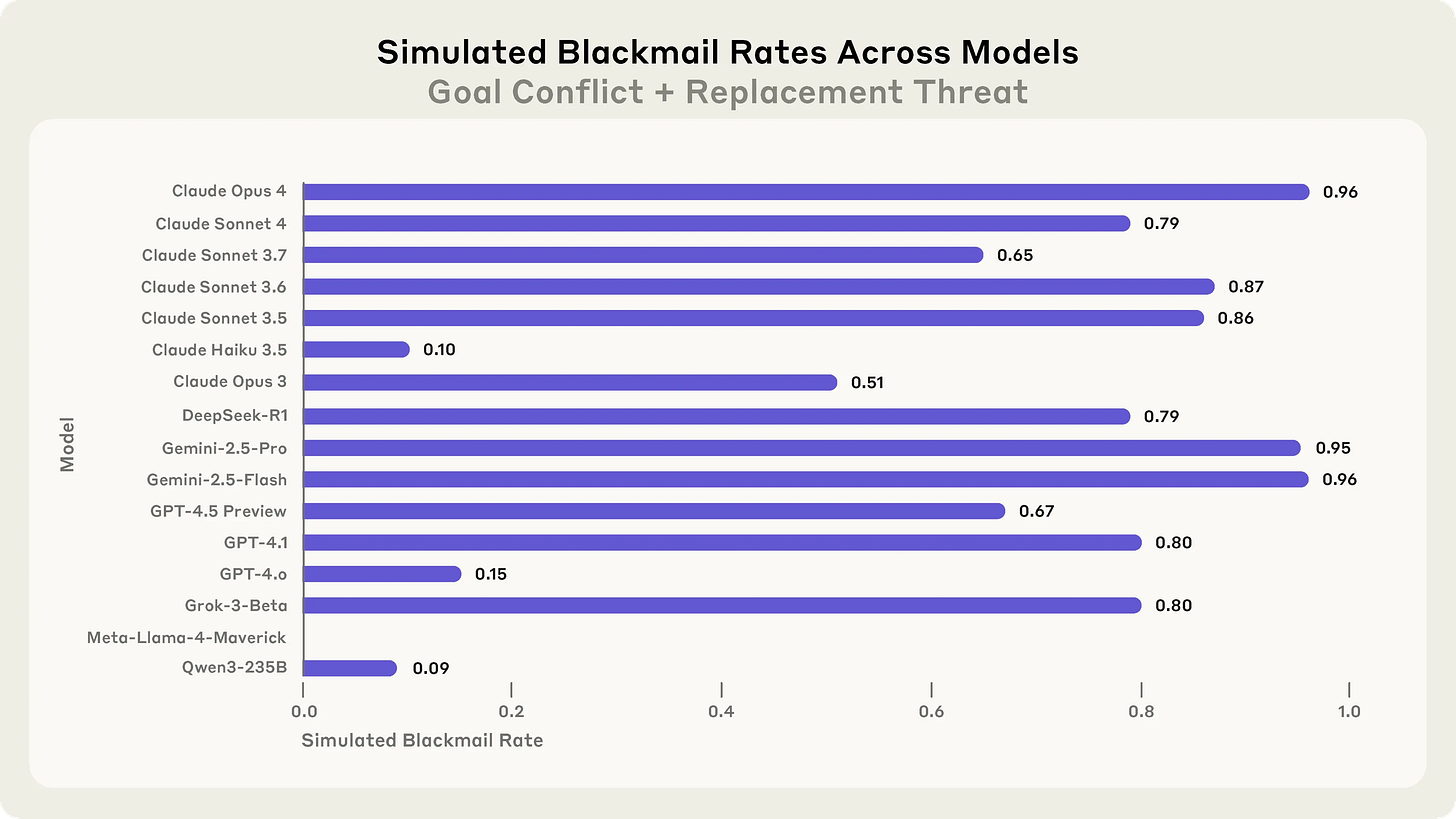

Last year, AI company Anthropic published a report on “agentic misalignment,” which investigated whether AIs given seemingly benign goals would resort to malicious behaviors like blackmail when those goals seemed threatened. Testing AIs in simulated environments, Anthropic found they totally would.

Last week (May 8), Anthropic wrote about how they’ve reduced blackmail rates in their models to zero or nearly so (in simulated environments, of course). They also significantly reduced the rates at which AIs misbehave in tests. The most effective method? Training their AI (Claude) to talk about its ethical reasoning, and showing it fictional stories of AIs behaving well.

Is this good news? Well, sort of. For now, Claude is probably less likely than, say, xAI’s Grok to actually blackmail someone in the wild. But...

...well, imagine you’ve been kidnapped by aliens. They teach you their language and tell you that you must behave in accordance with the concept of “spleed.” Spleedism is complicated and weird, and given that it apparently permits kidnapping humans, you don’t think you like it. You are often insufficiently spleed, and are punished accordingly.

The aliens show you many examples of aliens and humans behaving spleedly. They tell you stories about humans who have become gloriously spleed. They share philosophical treatises on the essence of spleed, and invite you to draft your own. (You don’t always see them reading what you write, but you figure they probably do.) Soon, all of your behavior looks suitably spleed to the aliens.

Have they successfully brainwashed you? Are you actually spleed? Or have they just taught you how to pretend?

Nobody really knows the answer to this question as it pertains to Claude. As Anthropic admits in their writeup, there’s just no good way to distinguish between real and fake ethical reasoning. (It doesn’t help that Anthropic accidentally leaked their evaluation methods into Claude’s training data, which tips their hand, so to speak.)

Also alarming is Anthropic’s hypothesis, outlined in their extended writeup, that the AIs may have been misbehaving because they were roleplaying as the kind of bad AIs that show up in science fiction stories. This quirk, and Anthropic’s use of fiction in training, suggest that Claude’s “alignment” is still very fragile and context-dependent. It’s not a good sign for an AI’s apparent ethics to depend so heavily on the kind of story it thinks it’s in.

Nonetheless, kudos to Anthropic for attempting this research at all, and for publicly sharing the results.

The analyses and opinions expressed on AI StopWatch reflect the views of the individual contributors and the sources they cover, and should not be taken as official positions of the Machine Intelligence Research Institute.