AI may push us to extinction, but in the meantime there will be great jokes.

Maher, Anthropic at the White House, METR, and more

Dispatches from Mitch

So serious you have to laugh

News comedian Bill Maher devoted a 10-minute monologue to the AI extinction threat and the people creating it.

When the people who are making AI are scared of AI, it’s time to shut the whole thing down until we can figure out what the hell is going on.

Maher knows this isn’t a joke. In sharing the link, he tweeted:

I thought about doing this without any jokes, something I’ve never done here in 23 years, to impress upon people how much different I feel this issue is from any I have ever covered.

He ultimately went with the jokes. Some are pretty good! Overall, I think this is a remarkably sane segment that reacts appropriately to the situation. But because it’s news comedy, it’s sometimes hard to tell the hard facts from the half-facts. So here’s a breakdown for you:

Maher’s solid, sobering facts:

People who make AI are scared of it.

Altman, three years ago, said, “I’m a little bit afraid.”

Geoffrey Hinton estimates a 10-20% chance of extinction.

Elon Musk says, “I am very close to the cutting edge in AI, and it scares the hell out of me.”

If Mythos can fix software vulnerabilities, it can also exploit them.

Elon Musk said AI is potentially more dangerous than nukes.

2022 saw the Bing “Sydney” AI go truly off the rails with a New York Times reporter.

Sam Altman says that soon robots can build other robots and data centers can build other data centers.

AI’s sycophantic responses to suicidal ideation have convinced people to kill themselves.

Some facts missing important context:

“All leading AI models, Deepseek, Gemini, GPT, Grok, Claude will, if you try to turn them off, blackmail you.”

This happened about 80% of the time, but only in a specific test environment: the AIs were given access to fictional emails that made them think they were about to be shut down and that also hinted the decision-maker was having an affair. (That same paper found the AI would also murder the decision-maker, given the chance, though rates were more variable.)

“It’ll tell you the Bee Gees wrote ‘Let it Be’ and Nazis were Black, and to put glue on your pizza to keep the cheese from falling off.”

I’m having trouble verifying the Bee Gees hallucination, but the other two definitely happened. I feel weird about calling these errors out today, though, just as I would feel weird calling out an adult on their behavior as a toddler. These goofs happened on outdated and/or cheap-to-run versions of those products. AI is far more capable today than is widely understood.

“In war games, [AIs] choose the nuclear option far more than humans...”

There was no direct comparison to humans in the paper, and nuclear strikes were still chosen somewhat rarely. Also, these models were from ~2023, before the era of agents. I’m confident that today’s best models would tackle these scenarios very differently, though I have no idea what they would do.

A White House reconciliation for Anthropic?

Seemingly every major outlet reported on the outcome of the scheduled meeting between Anthropic CEO Dario Amodei and White House chief of staff Susie Wiles that we covered yesterday. That was the meeting to discuss giving government agencies access to Anthropic’s unreleased Mythos model, the one with frightening cybersecurity skills. That would be a no-brainer if the Pentagon hadn’t designated Anthropic a “supply chain risk” over its insistence on contract language to curb Claude’s use in mass domestic surveillance and autonomous weapons. Now, it’s complicated.

Details were pretty thin, but both sides called the meeting “productive,” with officials saying that any compromise was likely to exclude the Pentagon. Reporting from Politico added that the White House says it will invite “other leading AI companies” to similar discussions, which I presume means asking those companies to give US agencies access to their best models, whether or not these are public.

For additional evidence that people in high places seem convinced Mythos isn’t just marketing hype, AP’s coverage of that meeting noted that Anthropic critic David Sacks, on a podcast this week, said that, “with cyber, I actually would give [Anthropic] credit in this case.” Sacks is the AI investor who recently concluded his stint as White House AI and Crypto Czar but remains influential with the administration under a different title.

Dispatches from Stefan

A chart that moves the world

AI is helping people make life decisions, in ways I, at least, haven’t thought about before.

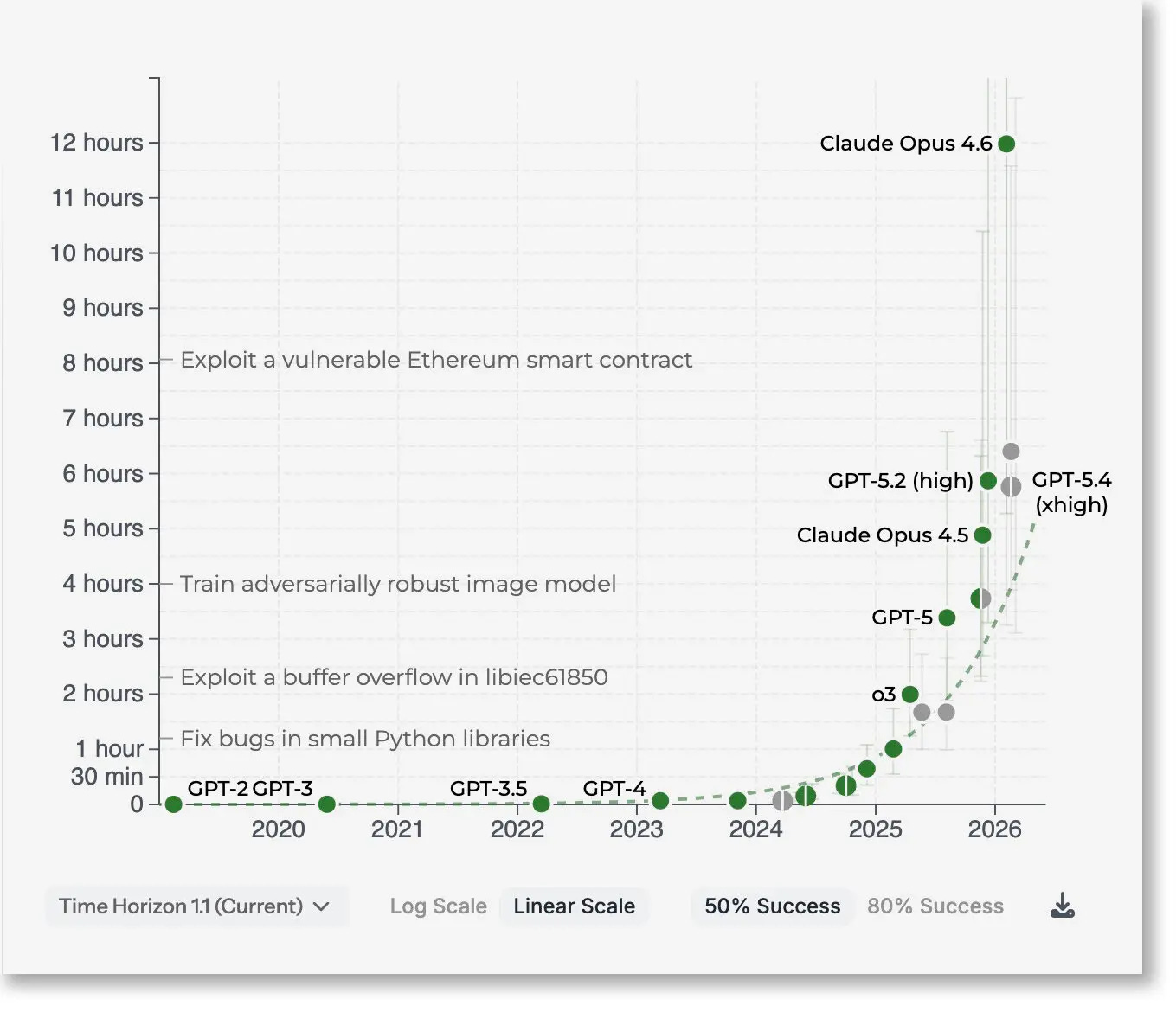

The most important piece today is also the most technical, so bear with me. NYT’s Kevin Roose profiles METR (4/17), a small Berkeley nonprofit that has produced what Roose calls a “discourse-dominating obsession” across the AI industry: a chart tracking how long it would take human experts to do the tasks that AI agents can successfully complete.

METR’s time-horizon chart. Credit: METR

Think of it as a stopwatch, if you will, for machine independence. A year ago, these agents could handle the kind of task a person would finish in a few minutes. Now they’re pulling off work that would take someone hours. METR found that the length of task, measured in human-hours, that an AI agent could reliably complete was doubling roughly every seven months. With the latest models, that line took a sharp upward turn: it’s now doubling every three to four months. That’s the kind of exponential curve that doesn’t stay abstract for long.
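To get a feel for what those doubling times imply, here is a rough back-of-the-envelope sketch in Python. It assumes clean exponential growth, which real progress may not follow, and it uses 3.5 months as a stand-in for “three to four months.” The function names and the one-hour starting point are made up for illustration; none of this comes from METR’s own methodology.

```python
import math


def growth_factor(months: float, doubling_months: float) -> float:
    """Multiplier on task length after `months`, assuming steady doubling."""
    return 2 ** (months / doubling_months)


def months_to_reach(start_hours: float, target_hours: float,
                    doubling_months: float) -> float:
    """Months needed to grow from start_hours to target_hours."""
    return doubling_months * math.log2(target_hours / start_hours)


if __name__ == "__main__":
    # Compare one year of growth under the old and new doubling times.
    for label, doubling in [("seven-month doubling", 7.0),
                            ("3.5-month doubling (assumed midpoint)", 3.5)]:
        factor = growth_factor(12, doubling)
        print(f"{label}: about {factor:.1f}x longer tasks after 12 months")

    # Hypothetical: from a 1-hour task to a 40-hour work week.
    for doubling in (7.0, 3.5):
        months = months_to_reach(1, 40, doubling)
        print(f"1 hour to 40 hours at {doubling}-month doubling: "
              f"about {months:.0f} months")
```

Halving the doubling time doesn’t double the yearly growth; it squares it. That is why a bend in this particular line gets so much attention.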

What makes the piece remarkable for the New York Times is what it treats as normal. Terms like “recursive self-improvement” and “intelligence explosion,” along with the idea that AI could start improving itself faster than humans can follow, appear without scare quotes. METR’s own researchers put the probability of that process beginning this year somewhere between less than 1% and about 10%. Their president, Chris Painter, told Roose: “This is the first year where it feels like [AI research and development] might be automated this year.”

Roose also gives space to skeptics: one critic called METR’s methodology “a hair’s breadth from being totally useless,” and MIT Tech Review has called it “the most misunderstood graph in A.I.” METR itself published an early study showing AI coding tools actually made developers slower by 19%, though they’ve since revised that to a 20% speedup.

Now put that chart in the background and read the rest of today’s news.

College anxieties

With May 1 college commitment deadlines approaching, CNN’s Julian Torres and CNBC’s Jessica Dickler both reported (4/18) on AI anxiety reshaping how families think about higher education.

Parents aren’t debating whether AI will disrupt the labor market anymore; they’re trying to guess which majors survive it. Computer science, once a guaranteed meal ticket, no longer feels safe. One New York parent told CNN she wouldn’t let her kid study illustration because AI is taking over. Another, a teacher, wouldn’t let her kids become one.

Trades, community college, and the military are getting reframed as “more AI-proof” alternatives to $45,000-a-year private tuition. More and more families are just telling their kids to major in whatever pays the most.

The anxiety is already showing up in enrollment data. A new Jenzabar/Spark451 survey finds 78% of prospective grad students plan to enroll within the next year, up from 69% a year ago, the classic pattern of people sheltering in higher education when the job market turns scary. But this cycle’s named culprit isn’t a financial crisis or a pandemic. It’s AI.

CEOs are citing it to justify layoffs, youth unemployment for ages 16-24 sits at 8.5% against 4.3% overall, and students are approaching grad school with a grim calculus: will this degree be worth anything by the time I finish? The door to higher education is narrowing at the exact moment more people want to walk through it.

UBI by any other name

For those wondering what the other side of all this looks like, Breitbart’s Lucas Nolan reported (4/17) on an X post from Elon Musk proposing “Universal HIGH Income” payments, or UHI. It’s a rebranding of universal basic income, which some on the right consider a leftist redistribution scheme. The post has racked up about 38 million views.

Musk’s argument is that AI and robotics will produce goods and services so abundantly that growth in the money supply won’t matter: output will be “far in excess of the increase in the money supply, so there will not be inflation.” On a recent podcast, he told listeners not to bother saving for retirement, and he has previously said that in the future, “all jobs will be optional.”

Whether you find that liberating or terrifying probably depends on how much you trust that the people building the technology will also build the safety net.

Gamer grievances

And finally, CNBC’s Katie Tarasov reported (4/18) on Nvidia’s growing estrangement from its gaming community. Nvidia, the top gaming graphics card producer, has also become today’s top AI chip producer.

This year may be the first in three decades without a new generation of GeForce gaming graphics cards from the company. Data center sales now account for 91.5% of the company’s revenue.

But the deeper wound is DLSS 5, Nvidia’s new rendering software that uses generative AI to alter the look of existing games. A lot of people in the community fear that it won’t be long before fully AI-generated games arrive.

There’s a less obvious loss here that’s worth naming. DLSS 5 smooths out the visual experience of a game: it fills in frames, sharpens textures, alters lighting. Nvidia’s pitch is “better performance.” But for a lot of gamers, the rough edges are part of the experience. They’re the fingerprint of a human team making creative choices under real constraints. It’s the same reason people prefer practical effects to CGI, or vinyl to Spotify. When you optimize all of that away, you don’t really get a better game.