Dispatches from Mitch

The race continues...

OpenAI today released GPT 5.5, an update to its flagship ChatGPT series.

Cade Metz of the New York Times provided the first major-outlet coverage of the announcement that I encountered. He paints a contrast between this open rollout and the cautiously gated rollout of Anthropic’s Claude Mythos. But I think that’s an apples-to-oranges comparison: As Metz himself reports, OpenAI has a separate cybersecurity-focused model, GPT-5.4-Cyber, that, like Mythos, has not been widely released.

My hunch, supported by a benchmark cited in this article, is that GPT 5.5 is likely to be more of a peer to the new and equally public Claude Opus 4.7 than to Opus’s larger sibling, Mythos. But as is usually the case with new model releases, we probably need to withhold judgement while we wait for real-world reports to come in.

Distillation revelation?

Reuters and other outlets reported today that a new memo from the White House Office of Science and Technology Policy accuses China of “industrial-scale” distillation of leading American AI models.

Distillation, roughly speaking, is a way of cloning another model’s capabilities by training a copycat model on a huge number of prompts and answers from the source model. I’m not sure what prompted the timing of this memo. Chinese companies have long been understood to distill leading US models, and American companies have confirmed multiple times in the past few months that they detected such activity. Distillation also plainly violates their terms of service.
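For readers who want a concrete picture of the distillation idea described above, here is a toy sketch in Python. Everything in it is hypothetical: `teacher` is a stand-in function with a hidden rule, not a real model, and the "student" is just a straight line fitted to the teacher's answers. Real distillation involves neural networks and vastly more data, but the shape is the same: query the source, record its answers, train a copy on the pairs.

```python
import random


def teacher(x: float) -> float:
    """Hypothetical 'source model': a hidden rule the student never sees directly."""
    return 3.0 * x + 2.0


def collect_pairs(n: int, seed: int = 0) -> list[tuple[float, float]]:
    """Query the teacher on n random 'prompts' and record its 'answers'."""
    rng = random.Random(seed)
    prompts = [rng.uniform(-10.0, 10.0) for _ in range(n)]
    return [(x, teacher(x)) for x in prompts]


def fit_student(pairs: list[tuple[float, float]]) -> tuple[float, float]:
    """Train the copycat: ordinary least-squares line fit to the recorded pairs."""
    n = len(pairs)
    mean_x = sum(x for x, _ in pairs) / n
    mean_y = sum(y for _, y in pairs) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in pairs)
    var = sum((x - mean_x) ** 2 for x, _ in pairs)
    slope = cov / var
    intercept = mean_y - slope * mean_x
    return slope, intercept


pairs = collect_pairs(1000)
slope, intercept = fit_student(pairs)


def student(x: float) -> float:
    """The copycat model, built only from the teacher's outputs."""
    return slope * x + intercept


# The student now closely tracks the teacher on a prompt it was never trained on.
print(abs(student(5.0) - teacher(5.0)))
```

The student never inspects the teacher's internals; it only needs enough question-and-answer pairs, which is why large numbers of accounts matter to anyone attempting this at scale.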

The memo describes Chinese companies using “tens of thousands of proxy accounts” that often employ jailbreaks. (All known models are vulnerable to “jailbreaks” -- prompting methods that get around behavioral guardrails.)

The memo may complicate Trump’s scheduled visit to Beijing in a few weeks. It may also complicate the recently approved sale of high-end chips to China by US company Nvidia.

Eye on the ball

An Associated Press story yesterday profiled a table-tennis-playing robot named Ace, which bested pro players in trials published as a study in Nature. The robot, from Sony AI, was trained with reinforcement learning and has nine cameras to track the ball’s movement and spin.

Sony AI deliberately capped Ace’s speed and reach to something humans would have a chance against. “It’s very easy to build a superhuman table tennis robot,” said the lab’s president. “It’s also not hard to imagine how such high-speed and highly perceptive hardware could be used in war.”

Coincidentally (I think), Fox News’s Kurt “CyberGuy” Knutsson covered a hoop-shooting basketball robot from Toyota today. Prior versions of this robot broke world records for most consecutive successful free throws by a robot (2,020) and longest made shot by a robot (80 ft, 6.5 in; a regulation court is 94 ft long).

This version... well, I’m not sure what this one does better, except that it looks cool.

With this version, CUE 7, the engineers started over. Like the table tennis bot, this basketball droid uses reinforcement learning, so in the long run, it should be more flexible and adaptive than its predecessors. It can learn from its misses.

(There’s no quote about military applications in the basketball piece, but the takeaway seems just as relevant.)

Not a shill

Breitbart (and other outlets) reported that actress Reese Witherspoon has responded to criticism of her recent Instagram post inviting women to join her in learning to use generative AI tools. Accused of being a shill for Big Tech, she writes (bold mine):

To be clear, no one is paying me to talk about this. I’m just a curious human. My kids are learning about AI tools, I know a lot of founders who are vibe coding, and I hear about people using AI in EVERY sector of business.

I understand environmental concerns. I care deeply about local communities. And I have concerns about impending AGI. I don’t believe computers should replace humanity. I’m planning on learning as much as possible so that I’m educated about this technological revolution. If you want to learn with me, great, let’s do this! If you don’t, that’s okay too.

She’s in good company: I don’t believe computers should replace humanity either! That makes about eight billion of us, I hope. (We’ll try to reach out to Ms. Witherspoon. We know a book she might be interested in.)

To her thoughts on learning: The AIs out there today aren’t the ones that will kill us, so despite some of the ethical concerns around them, I, too, encourage people to learn to use them. There’s no better way to understand just how capable AI has already become, and you can channel some of your increased effectiveness into pushing things in a saner direction.

Dispatches from Beck

Combative... and conciliatory?

Readers may recall the ongoing dispute between Anthropic and the federal government. Since being designated a Supply Chain Risk (SCR), Anthropic has initiated lawsuits in federal courts both in San Francisco, where it was granted a preliminary injunction blocking the SCR designation, and in Washington DC, where it failed to secure an injunction. The DC court has scheduled a hearing on the merits for May 19.

DOJ has requested that its San Francisco appeal be paused, Politico reports, pending the comparatively friendly DC court’s ruling.

As reported by Axios and the AP, Anthropic has already filed arguments for that hearing. These arguments clarify that Anthropic “has no visibility, technical ability or any kind of ‘kill switch’ for its technology once it’s deployed.” This undermines government claims that Anthropic would restrict government usage, while also highlighting that a common story minimizing AI risk (aka “just unplug it”) no longer accurately reflects the conditions of LLM deployment.

The situation is further complicated by Claude Mythos, Anthropic’s frontier model with significant cyberhacking capabilities. Despite the SCR and lawsuits, the Trump administration and Anthropic are moving to deploy the model across the federal government. Current reporting does not cover whether deployment will be exclusively for hardening defense, but I’m confident that cyber offense projects are also excited about model access.

Following last Friday’s meeting between the White House chief of staff and Anthropic CEO Dario Amodei, Trump told CNBC that they had “very good talks,” that Anthropic’s executives are “very smart people” and that a deal with the company is “possible.” The content and effects of such a deal remain unclear.

A chilling demo

Meanwhile, Politico’s Dana Nickel reported (4/22) on a “chilling” closed-door Department of Homeland Security briefing that let House lawmakers interact with jailbroken AI models. Nickel writes that “researchers showed lawmakers just how easy it is for bad actors to weaponize artificial intelligence models to build a bomb, plan a terror attack or launch a cyberattack” by providing lawmakers with models whose safety guardrails had been ablated (a term for modifying model weights to undo the effects of post-training, the part of model training that most instills safety guardrails).

Unexplored in the piece are the complicated effects of open-sourcing models. On one hand, it is the open release of model weights that allows malign actors to ablate safety training. On the other hand, open-source models have been the basis for much of the science of LLMs, including research for cyber defense. Tech demos and scientific papers are (a) already concerning and (b) lagging indicators.

If you have to ask...

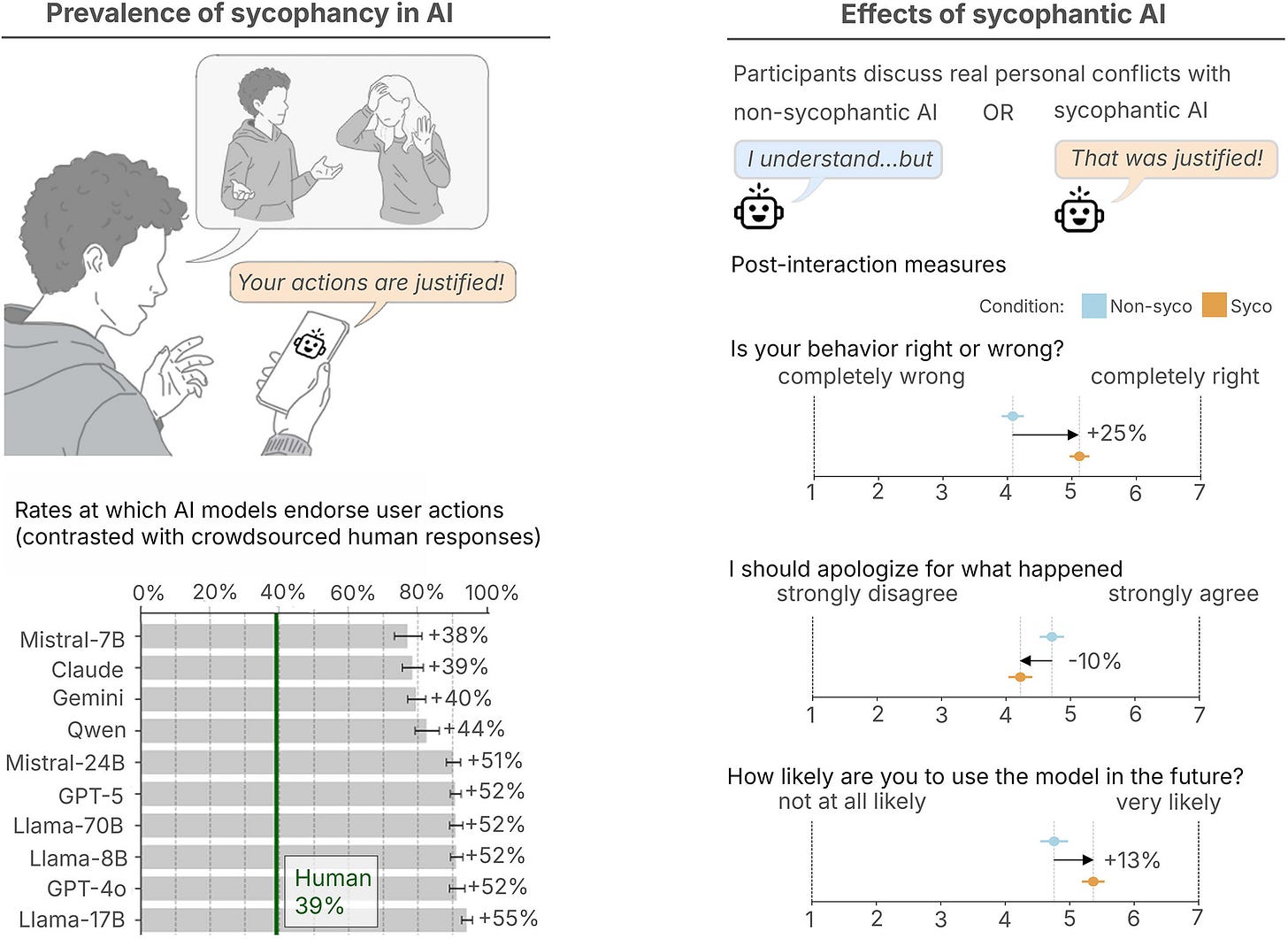

NPR’s Ari Daniel reports (4/23) on a recent paper in Science by Stanford’s Myra Cheng and colleagues, the first preregistered and peer-reviewed quantification of AI social sycophancy. The paper is worth a read. It asks LLMs to weigh in on posts from the Reddit subforum Am I the Asshole, and finds that on posts where human Redditors deemed the poster in the wrong, LLMs still endorsed the poster’s actions 51% of the time. As NPR summarized, Cheng et al. “found that this sycophancy was something that people trusted and preferred in an AI — even as it made them less inclined to apologize or take responsibility for their behavior.” Evaluation took place from March to August of last year, which is both an unusually quick turnaround for a peer-reviewed paper and an eternity compared to the pace of frontier progress.

Scam scrum

And in Wired, Will Knight writes “5 AI Models Tried to Scam Me. Some of Them Were Scary Good.” He reviewed AI-generated phishing emails directly targeting him, referencing his interests and work, and concluded that the risks could be a “serious problem for many users.” But he’s less concerned than he could be, because many of the attacks have “tells,” like when models start producing gibberish. “I wouldn’t say that AI has made attacks more convincing, but it has made it easier for one person to scale attacks,” said Rachel Tobac, CEO of SocialProof Security. “The kill chain is getting entirely automated.”

That’s already concerning, but further inquiry only makes things worse: the demo Knight interacted with was built with older, weaker models, including Anthropic’s Claude 3 Haiku, OpenAI’s GPT-4o, and DeepSeek’s V3. Not only are all of these much less capable than the models available today, but this also shows that even well-positioned journalists (he works for Wired!) and security researchers struggle to keep up with the pace of progress.

The analyses and opinions expressed on AI StopWatch reflect the views of the individual analysts and the sources they cover, and should not be taken as official positions of the Machine Intelligence Research Institute.