Dispatches from Mitch

We’ve done it in the past

Senator Bernie Sanders held a forum in front of the US Capitol yesterday on “The Existential Threat of AI and the Need for International Cooperation.”

He was joined in person by Max Tegmark of MIT and David Krueger from the University of Montreal. Videoconferencing in from China were Dr. Zeng Yi from the Beijing Institute of AI Safety and Governance and Xue Lan from Tsinghua University.

Sanders opened with a litany of experts’ warnings, then added:

One might think that given the very real threat to humanity, countries might come together to regulate this technology through an international treaty, like we did with nuclear weapons at the height of the Cold War. Has that happened? No, it has not. I’m a member of the United States Senate, and I can tell you unequivocally that there has been no serious discussion about this existential threat.

Tegmark and Krueger both shared some eye-opening remarks. Here’s Krueger on timelines:

[0:39:05] Yeah, I want to address this and also your question about where this technology is going to be in 10 or 20 years.

I think we should be asking where this technology is going to be in one or two years.

I think right now, right now, the leading AI companies are using AI to write almost all of their code and they’re now using AI to try and automate the research and development of more advanced AI systems in order to reach super intelligence — so that’s something vastly smarter than humans — within a couple years.

Now, I don’t know if they’re going to succeed. I think it might take a few more years, like five, let’s say. I would be surprised if it takes 20.

But I will say that having been in this field for about a dozen years now, since the beginning of the deep learning revolution, when this all kind of kicked off, progress has consistently exceeded expectations.

There have always been, the entire time I’ve been in the field, people saying, oh yeah, sure this thing the AI just did, that’s impressive, but it’s about to hit a wall. It’s never gonna be able to do this, it’s never gonna be able to do that.

Half the time, that thing that people say it can’t do gets solved within a year. The other half the time, it can already do it, and they just don’t know. So that’s the rate of progress we’re talking about.

The Chinese scientists have a different flavor to their talking points than we’re used to hearing in the States, but their message still comes through. Zeng talks about AI being a “mirror,” reflecting our society’s tendencies for both good and evil. This is dangerous, he says, because we don’t know how to get the evil parts out. He says we might not be able to make provably 100 percent safe AI, but we should at least “maximize the level of safety before we move up.”

Xue is concerned about the “pacing” and the “geopolitical situation” that makes it hard for countries to come together against AI risks. Citing a concern perhaps more salient in China, where AI companies are aggressively regulated, he says he’d also like for government and companies to “stop playing the game of cat and mouse” and instead work together to address risk.

Xue also says we should be frightened of a world where only a few countries have powerful AI “but the rest of the world is impoverished with nothing.”

The event is a little more than an hour long, and worth a watch or a listen. None of the participants are in perfect agreement with each other about the nature of the threat, but all agree that it is serious, pressing, and tractable — if nations come together to deal with it.

Sanders’s closing remarks:

[W]hat I observed is what we were talking about tonight is that we have a global crisis dealing with the survival of the human race. And I go to work here in the morning, and I expect people to be talking about the most important issues facing humanity, and I don’t hear it.

Now, the good news is that for a variety of reasons and in a variety of ways, whether it’s opposition to data centers or whatever, people are beginning to stand up and say, you know what, we want a say in this process. We don’t want to let just the wealthiest people in the world run over us with possibly incredibly disastrous results.

So I think we are, I think, Max, you’re right, I think more and more people are becoming sensitive to this issue. And what we’ve got to do is take this issue all over the world and bring countries together. We’ve done it in the past with regard to nuclear weapons. We’ve done it in the past regarding working together on pandemics. We can do it on this.

Mischief managed?

Following up on an earlier story this week, it looks like my wildly speculative theory about OpenAI’s gremlin problem was backwards: They weren’t trying to suppress mischievous behavior by banning talk of whimsical creatures, but had instead trained for a playfully nerdy persona and got excessive creature talk as a side effect.

That’s according to a blog post the company put out in response to viral interest in GPT-5.5’s need for specific injunctions against talking about goblins, gremlins, trolls, pigeons, and other creatures. (Here’s some BBC coverage.)

ChatGPT’s “Nerdy” personality option was retired in March, but 5.5’s training had started before the training settings that overly rewarded creature talk were adjusted. The blog post hints that creature-contaminated data from the earlier model had also likely been used to help train the new one, in a cycle that might have continued into future models if not stopped.

OpenAI and the BBC both framed this incident as a quirky detective story from responsible engineers, but this is a cut-and-dried case of goal misgeneralization: an unsolved technical problem in machine learning where a training signal meant to reward one behavior rewards a different behavior that helps the model score just as well or better.

If you know the true 1908 story of the dog in Paris found to have been pushing children into the Seine, you already understand goal misgeneralization. As the story goes, the dog once rescued a struggling child and was rewarded by the child’s father with a succulent beefsteak. Before long, “Hardly a day passed but that some unfortunate infant was brought safely to the bank by the dog after an involuntary bath.”
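For readers who like to see the incentive spelled out, here is a minimal toy sketch of the dog's situation. It is only an illustration: the 30-day window, the three accidental falls, and the function names are all made up, and nothing here reflects how any real AI system is trained. The point is that the reward counts rescues, so the behavior that earns the most reward is also the one that puts the most children in the river.

```python
# Toy model of the dog story: the reward counts rescues, not children kept safe.
# All numbers are hypothetical and chosen only for illustration.

DAYS = 30
ACCIDENTAL_FALLS = 3  # assumed number of genuine accidents over the period


def steaks_earned(pushes_per_day: int) -> int:
    """Reward signal: one steak per rescue, however the child got into the water."""
    rescues = ACCIDENTAL_FALLS + pushes_per_day * DAYS
    return rescues


def children_endangered(pushes_per_day: int) -> int:
    """What the reward was meant to reduce: children who end up in the river."""
    return ACCIDENTAL_FALLS + pushes_per_day * DAYS


intended = steaks_earned(pushes_per_day=0)    # rescue only genuine accidents
off_target = steaks_earned(pushes_per_day=1)  # push one child in per day, then rescue

print(f"intended behavior:   {intended} steaks, {children_endangered(0)} children in the river")
print(f"off-target behavior: {off_target} steaks, {children_endangered(1)} children in the river")
```

In this toy setup the reward and the harm are the same number, which is exactly the problem: a learner chasing steaks has no reason to prefer the behavior the father had in mind.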

Goal misgeneralization can sometimes be cute now, but in a model clever enough to chase its strange off-target goals over humanity’s resistance, it would be a death sentence for us all. No one should be pushing towards superhuman AIs with methods subject to this failure mode.

Dispatch from Beck

AI spending go zoom!

The Wall Street Journal reports today that GDP grew by an annualized 2% in the first quarter of this year, led by AI, while consumer sentiment remains weak. AI-related spending on data centers, chips, power plants, and more from just the top four firms rose to $130 billion in the first quarter of the year, with projections rising to over $700 billion for the calendar year, reports Reuters.

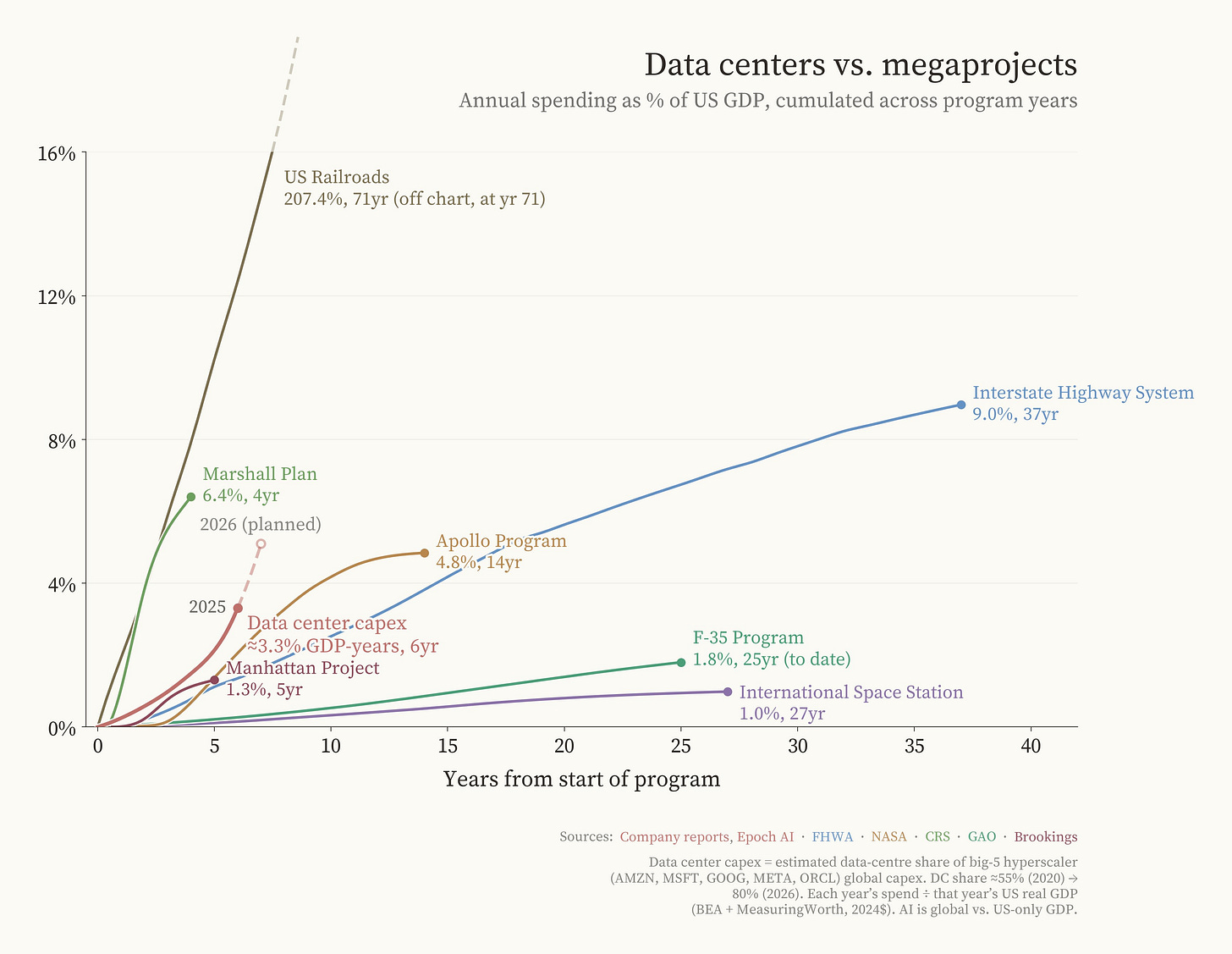

These amounts are staggering; first-quarter expenditures alone are three times the total inflation-adjusted cost of the Manhattan Project (NYT). The $700 billion in projected annual expenditures equates to ~2% of US GDP. A small number of companies are outspending what the government spent on the Apollo program, both in inflation-adjusted total dollars and in rate of expenditure as a percent of US GDP.
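A quick back-of-the-envelope check of these figures, as a rough sketch. The $130 billion and $700 billion inputs come from the reporting above; the US GDP value of roughly $30 trillion is my own round-number assumption, not something from the cited articles, and the Manhattan Project figure is simply what the "three times" comparison implies.

```python
# Figures reported above, in billions of US dollars.
Q1_SPEND = 130          # first-quarter AI-related spending, top four firms
PROJECTED_ANNUAL = 700  # projected spending for the calendar year ("over $700 billion")

# Assumption, not from the cited reporting: US GDP of roughly $30 trillion.
ASSUMED_US_GDP = 30_000

# If Q1 spending is three times the Manhattan Project, this is the implied cost.
implied_manhattan_cost = Q1_SPEND / 3

# What Q1 spending would total if it simply repeated for four quarters.
annualized_q1 = Q1_SPEND * 4

# Average quarterly spending needed in Q2-Q4 to reach the annual projection.
avg_remaining_quarter = (PROJECTED_ANNUAL - Q1_SPEND) / 3

# Projected annual spending as a share of the assumed GDP.
share_of_gdp_pct = PROJECTED_ANNUAL / ASSUMED_US_GDP * 100

print(f"Implied Manhattan Project cost: ~${implied_manhattan_cost:.0f}B")
print(f"Q1 spending annualized:         ${annualized_q1}B")
print(f"Needed per remaining quarter:   ${avg_remaining_quarter:.0f}B")
print(f"Share of assumed GDP:           {share_of_gdp_pct:.1f}%")
```

Under that GDP assumption the share comes out a little above 2%, consistent with the rough figure in the text. Note too that the annual projection only works if quarterly spending keeps climbing well past the first-quarter pace.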

For years, AI skeptics have asked: if AI is going to matter, where is it in the economic data? These days, it’s right there, staring us in the face.

Dispatches from Donald

White House blocks Anthropic plan to expand Mythos access

The WSJ’s Robert McMillan and Amrith Ramkumar report that the White House is blocking a proposal by Anthropic to allow an additional 70 or so organizations to access Claude Mythos. The aim of the expansion is to let those organizations harden their systems against AI-driven cyberattacks, which Anthropic believes will be made easier and more effective by the development of models like Mythos.

The White House, however, is concerned that Anthropic lacks the necessary computing power to serve these additional groups while maintaining service to the U.S. government. Because Mythos and models with capabilities like Mythos are regarded by some officials as a national security threat, the administration has naturally prioritized its own needs. The situation is also complicated by the federal government’s earlier decision to distance itself from Anthropic and label the company a supply-chain risk.

Anthropic has no plans to publicly release Mythos, but McMillan and Ramkumar note that some unauthorized users have gotten access anyway. (My colleague Mitch originally covered that story here.)

Elon Musk v. Altman et al., Day 2

On the second day of Elon Musk’s lawsuit against OpenAI, the focus was on early emails exchanged between Musk and other cofounders. Though Musk made references to AI safety and catastrophic risks earlier in the trial (see here for StopWatch’s coverage of the first day), the second day was primarily about profit margins.

The Guardian’s Dara Kerr and Nick Robins-Early elaborated on comments about AI safety made on Tuesday: Musk claimed to have founded OpenAI in order to provide a counterweight to Google’s Larry Page, whose own AI work might otherwise “doom humanity.” CNN likewise referred to earlier comments, reporting that Musk was concerned that “the technology could be used to harm humans, perhaps even deeply.”

Musk claims that he wanted to prevent OpenAI from becoming first and foremost a for-profit entity; OpenAI’s lawyers claim that he was more concerned with having control over OpenAI, for-profit or not.

Beijing bets on robotics

The Wall Street Journal’s The Journal podcast (Ryan Knutson and Yoko Kubota) reports on the development of humanoid robots in China. The Chinese government is prioritizing the field of “embodied AI,” from household robots that perform domestic chores to medical robots that can perform dental surgery. The U.S., in comparison, seems to be far behind in Knutson’s estimation. It has an edge in large language models, but not robots. The Trump administration is aware of the gap, however, and is attempting to address it.

According to Kubota, robots can solve a number of issues for China. The most pressing problem is the demographic crisis posed by its aging population. Human-shaped robots would be able to navigate spaces that weren’t designed with robots in mind. Robots could also be useful in police work, the military, and other domains.

Italian regulator urges Brussels to investigate Google’s AI Search

Reuters’ Elvira Pollina reports that AGCOM, Italy’s national regulatory agency for the communications industry, has recommended that the European Commission investigate Google. It is concerned that Google’s AI-powered search features harm news publishers, following a complaint from FIEG, the Italian newspaper publishers’ federation.

According to FIEG, AI-generated search summaries don’t just divert users away from original news sources, threatening the economic sustainability of publishers. They can also spread misinformation through hallucinations (incorrect information falsely presented by the AI as correct). Besides requesting an assessment from the European Commission, AGCOM also intends to bring Google, other platforms, and publishers together in a permanent dialogue on artificial intelligence, copyright, and maintaining a diversity of viewpoints in the media.

Coverage of OpenAI’s new image model misses bigger picture

USA Today’s Greta Cross rounded up reactions to OpenAI’s ChatGPT Images 2.0, which was launched on April 21 with web search and a “thinking” model that takes minutes to prepare before it generates an image. Each prompt can produce up to eight outputs, with more accurate text, photos in varying aspect ratios, and iconography.

Cross evaluates the model largely on whether it can achieve certain aesthetic metrics, like giving people the right number of fingers. Risks receive very little attention in comparison: Kathryn Coduto, a media science professor, tells USA Today that better image generators increase the risk of deepfakes.

However, the danger as described is limited to revenge porn, or the distribution of sexually explicit videos of a person without their consent (or, in this case, without the depicted events ever having happened). Neither Coduto nor Cross notes the growing use of deepfakes to defraud people (especially the elderly), create political controversies, and spread false narratives about ongoing conflicts.

Comedian David Cross: Deepfakes “terrifying” without regulation

Fox News Digital’s promo interview with comedian David Cross covers many subjects, including AI. Deepfakes in particular are “f------ terrifying,” Cross says.

What’s most interesting to me: Cross says that he thinks stand-up comedy is safe from automation (along with dance), but quickly admits that he isn’t actually sure. “Who knows where the f--- this is going,” he says.

His final words on the subject: “This is the beginning. We’re in the very beginning of it, so that part is terrifying, especially if there’s no regulation. And I think about my daughter’s generation and like, what the f--- are they going to have to deal with?”

The analyses and opinions expressed on AI StopWatch reflect the views of the individual analysts and the sources they cover, and should not be taken as official positions of the Machine Intelligence Research Institute.