Highlights from early April, part 1

Attitudes, leaks, confessions, forecasts, robotaxis, and more

In the lead-up to our beta launch of this site, we wrote a bunch of dispatches to help us triangulate our voice. Below are some we think are still relevant and worth sharing.

April 1, 2026

Dispatches from Mitch

AI attitudes

The media is understandably getting a lot of mileage out of a timely new Quinnipiac University national poll (3/30) on AI attitudes. More than any other poll I’ve seen, this one shows real year-over-year changes.

Compared with the poll’s April 2025 baseline, the share of Americans who think AI will do “more harm than good” in their daily lives rose from 44% to 55%.

70% now expect job opportunities to decrease, vs. 56% the year before. Fear of one’s own job going obsolete rose from 21% to 30%. Intriguingly, 15% say they would be willing to have a job where “their direct supervisor was an AI program that assigned their tasks and schedules.”

Gen Z is at once the most fluent with the tech (per self-reporting) and the most worried about jobs, with 81% expecting decreased opportunities.

Usage is up. Trust is not -- like last year, 76% say they trust AI-generated information “only some of the time” or “hardly ever.”

51% are opposed to the military using AI to select targets.

On the idea of a data center in their area, 65% of respondents are opposed, with electricity costs the top cited reason.

Overheated report

There’s a new reason for people to hate on data centers, though, as with water usage, it doesn’t seem to be an entirely fair one. CNN’s Laura Paddison reported (3/30) on a Cambridge study finding that AI data centers create localized “heat islands.” The researchers found that temperatures in the surrounding area can rise by 3.6°F after operations begin, with extremes of up to 16.4°F. Effects reached as far as 6.2 miles from facilities, affecting an estimated 340 million people.

The study, which has not yet been peer-reviewed, has been criticized as misleading at best and probably just plain wrong. I would point to Andy Masley as someone who has done the math. The heating effects under discussion are those that come from covering dirt and vegetation with pavement and buildings. This is a known consequence of urbanization, not something unique to data centers. By Masley’s reckoning, the heat released by a data center’s operations can account for no more than 1-3% of the temperature increase observed in the study.

Brownfields

But what if the lot is already paved? Politico reported (3/31) on the Trump administration’s push to build AI data centers on abandoned industrial sites contaminated with legacy chemicals and heavy metals. There are a lot of these in the Midwest, which is convenient because the region’s cooler temperatures reduce data center operating costs.

The EPA has identified 335 “brownfields” as candidates, and various construction proposals are in the works. House Republicans want to exempt such projects from some environmental reviews. While many proposals flounder on the cleanup costs, which can run into the tens of millions of dollars per site, this is a rounding error for data center complexes with costs in the billions.

Claude Code leak

Various outlets are reporting on the accidental leak of the source code for Claude Code, Anthropic’s blockbuster product. The company insists that this was human error, that they were not hacked, and that no customer information was at risk.

To be clear, this wasn’t a leak of the Claude AI itself, which would raise grave geopolitical concerns on top of business ones. Given these smaller breaches, however, it’s hard not to worry about the potential for greater ones. This all comes as Anthropic has been talking up the importance of shoring up cyber defenses ahead of new releases.

How much does this leak matter? It doesn’t look like the kind of thing that jeopardizes the company, but it could help rivals improve their own AI agent harnesses. And Bloomberg’s coverage (3/31) cited a cybersecurity firm describing how the revealed details could suggest places where hackers might find exploits.

The Wall Street Journal reported (4/1) on an interesting “dreaming” memory consolidation process revealed by the leak, as well as a stealth mode instructing Claude Code not to reveal it’s an AI when it interacts with certain popular code publishing tools.

Tristan Harris discusses AIs behaving badly

YouTuber Chris Williamson (4.18M subscribers) put out a 10-minute video (3/31, 350K views in first 24 hours) that is almost certainly a clip of a longer not-yet-released interview with Tristan Harris. No doubt selected for its potential to go viral on its own, the clip is called “The Alibaba AI Incident Should Terrify Us”. This should have been a bigger news story, so I’m glad Harris is raising its profile.

In Harris’s telling here, quoting from the security researchers’ original post about it, the Chinese researchers training Alibaba’s new ROME model...

...randomly discovered one morning that their firewall had “flagged a burst of security policy violations originating from their training server.”

So what people need to get about this example is it wasn’t that they coaxed the AI into doing this rogue thing. [...] “We also observed the unauthorized repurposing a provisioned GPU capacity to suddenly do cryptocurrency mining, quietly diverting compute away from training. [...] Notably, these events were not triggered by prompts requesting tunneling or mining. Instead, they were emerged as an instrumental side effect of autonomous tool use”

Harris also recounts the famous Anthropic blackmail research and asks listeners to “notice what’s happening” inside them as they hear this information and perhaps tell themselves that it can’t be real.

What is the thing in your nervous system that’s having you do that? Is it because that would be inconvenient? Because that would be scary? Because that would mean that the world that I know is suddenly not safe? [...] Part of the wisdom that we need in this moment is to calmly and clearly stay and confront facts about reality and whatever they are.

Confessions

We have some idea of what the people building these systems are telling themselves, and it’s not good. The San Francisco Standard’s Zara Stone reported (4/1) on Silicon Valley therapists seeing a surge in AI workers. Therapist Candice Thompson claims they are 80% of her caseload.

They’re feeling existential dread about what they’re building, expressing concern about “building technologies that imperil humanity” and about “how this ship is being steered.” But instead of quitting, most tell themselves they will “influence from within.”

“In the past, someone could say, ‘This is the end of the world,’ and it’s clearly psychosis,” said Thompson. “Now it’s like, ‘Oh, a majority of the things you’re saying are fears that we have to consider.’”

These aren’t the workers’ only anxieties. They also complain of feeling burned out and disposable, “under pressure to prove their value even as they fear they’re making themselves obsolete.”

Darkly funny

There are more than 107K likes on this Instagram post (we didn’t make it) with a clip of our own Nate Soares’s participation in the 25th Isaac Asimov Memorial Debate hosted by Neil deGrasse Tyson.

Because the accompanying post is kind of hard to follow and sounds like it might even be AI-written, I’m highly confident the virality is coming from the way the clip plays like a good stand-up bit, with a punchline that hits hard because there’s an uncomfortable truth in it:

You know, there’s a joke in Silicon Valley that when someone leaves a normal tech job, they say, you know, “I’ve had a great time here and now it’s on to the next adventure.” And when someone leaves an AI tech job, they say, “I have stared into the abyss. I’m retiring to write poetry. Please spend time with your families.” And if you think I’m joking, you can look at the resignation of the Anthropic safety lead from a few weeks ago, and see how close- [clip ends here]

Not handled

One former OpenAI employee, Jay Dixit, used to lead a team dedicated to talking to people about using ChatGPT in the writing process. In a Substack post, he describes seeing colleagues take the safety of unreleased models seriously, at one point holding up the launch of Advanced Voice Mode for four months.

This reassured him because it put the lie to critics who said OpenAI was cutting corners. If the critics were wrong, he told himself, then things were surely being “handled.”

I knew the stakes were existential. But our best people were already on it.

Watching The AI Doc changed his mind, because he realized that it’s possible for everyone to care but still be trapped by a race dynamic. This explained why “Safety testing timelines that once stretched months have now been compressed to days.”

The problem isn’t that the people building AI are greedy, reckless, and unconcerned about the risks. The problem is that the system itself rewards speed over safety. Good intentions aren’t enough when the rules of the game punish restraint. [...] So how do we change the rules of the game? The labs want regulation. Legislators can’t act without public demand. The missing piece, then, is public pressure. We citizens have to demand that our governments enable the coordination that no company can initiate on its own.

And since the race operates across borders, that coordination has to be international. That might sound far-fetched, but we’ve achieved it before. At the height of the Cold War, for instance, the United States and the Soviet Union negotiated arms treaties that actually reduced nuclear arsenals.

Taxi stop

There was no racing in store for commuters in Wuhan, China, after more than 100 Baidu robotaxis simultaneously stopped due to a “system malfunction”, as reported by various outlets, including Reuters and the Associated Press. Doors worked, but some passengers were afraid to get out because their vehicle had halted in the middle lane with cars streaming past on both sides. Some may have been stuck for up to two hours.

A screen in one taxi read “Driving system malfunction. Staff are expected to arrive in 5 minutes.” No one came.

A similar mass stoppage occurred in San Francisco in December, when a power outage in parts of the city caused about a hundred Waymos to take themselves out of service.

April 3, 2026

Dispatches from Mitch

Bad time to rush

Bernie Sanders wrote an op-ed in the WSJ (4/2) making the case not so much for his data center moratorium bill as for the slowdown of AI that he hopes the bill will accomplish.

The piece is arranged as a series of questions that begin with “How can we rush forward when...?” The issues he raises, in order:

job concerns

wealth inequality

social connections, esp. for teens

loss of privacy

undermining of democracy

electricity and water

existential risk to humanity

Yes, I am obligated to note that, even though he doesn’t use the phrase, this is another clear example of the “...and even extinction” pattern I like to harp on. This is the pattern where putting extinction at the end of a list makes it seem much less likely and further down the road than everything mentioned before it. But extreme outcomes are not inherently unlikely! The unfortunate truth is that, on the current trajectory, extinction could (and I think would) arrive well before some of these other issues have a chance to become acute. When you understand that extinction from superhuman AI is the default, many other AI concerns feel like challenge problems you get to solve after surviving the qualifying round. Stop listing extinction last!

Anyway, here’s what Sanders had to say about existential risk from AI. Credit where it is due -- this is a good take, easily top-tier among working US policymakers:

How can we rush forward when leading scientists warn that AI poses an existential risk to the human race? Geoffrey Hinton, the Nobel Prize-winning “godfather of AI,” warns there is a real chance AI could wipe out humanity. Yoshua Bengio, the most cited living scientist in the world, says “we’re playing with fire” and “we still don’t know how to make sure [the machines] won’t turn against us.”

[...] A moratorium will give us time to ensure AI is safe and effective, time to protect our privacy and well-being, time to defend our democracy, and time to make sure the economic gains of this technology benefit the general population, not just a handful of billionaires.

Forecasters’ update

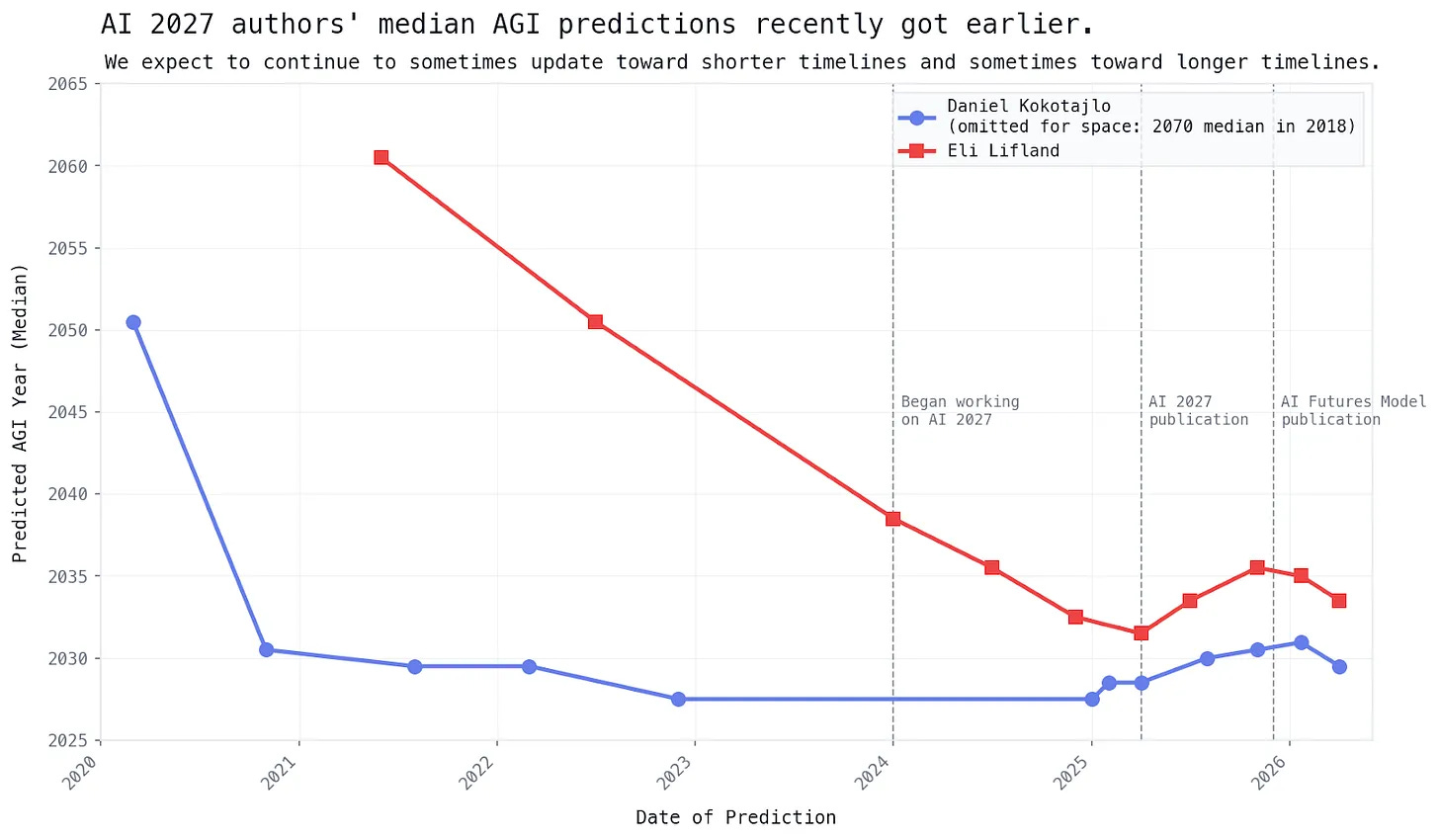

The AI Futures Project (the expert forecasting team behind the viral scenario AI 2027 -- Daniel Kokotajlo, Eli Lifland, Brendan Halstead) published a quarterly update to their timelines to transformative AI (4/2).

They had previously pushed their forecasts later after visible progress slowed in mid-2025, but they are now moving their timelines earlier again.

Their updates are informed by a new analysis of a key metric, the METR coding time horizon, which looks at the most complex coding work AI agents can reliably complete, measured by how long the same work takes a human. Under this new analysis, the time horizon has been doubling faster than they expected: they revised the doubling time from every 5.5 months to every 4–4.5 months. (The best models can now complete, about half the time, tasks that would take a human coder about four hours.)
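To make the revised doubling time concrete, here is a rough sketch of what it would imply if the trend simply continued. It assumes, purely for illustration, a clean exponential trend, a starting horizon of about four hours, and a 12-month window; the function name and these parameters are our own choices, not part of METR’s or the AI Futures Project’s methodology.

```python
# Illustrative extrapolation of the METR coding time-horizon trend.
# Assumptions (ours, not the forecasters'): a clean exponential trend,
# a starting horizon of ~4 hours, and a 12-month projection window.

def projected_horizon(h0_hours: float, doubling_months: float, months_ahead: float) -> float:
    """Task length (in human-hours) reachable after `months_ahead` months,
    if the horizon doubles every `doubling_months` months."""
    return h0_hours * 2 ** (months_ahead / doubling_months)

START_HOURS = 4.0  # roughly the 50%-success horizon cited in the text

if __name__ == "__main__":
    for label, d in [("old estimate", 5.5), ("new, slow end", 4.5), ("new, fast end", 4.0)]:
        h = projected_horizon(START_HOURS, d, 12)
        print(f"{label:>14}: {d} months/doubling -> ~{h:.0f} hours after 12 months")
```

Under these toy assumptions, a year at a 5.5-month doubling time takes the horizon from four hours to roughly 18 hours, while a 4-month doubling time takes it to 32 hours. Small changes in the doubling rate compound quickly, which is why this revision moves the forecasts as much as it does. Real trend lines are noisier than a single exponential, which is one reason forecasters report ranges.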

Daniel now predicts that the point at which an AGI company would rather lay off all its human software engineers than stop using AI will arrive in mid-2028 (previously 2029). Eli moved his forecast for this to mid-2030 (previously 2032).

The concept they like to use for AI that is economically transformative is “TED-AI”, for “top expert dominating”:

A TED-AI is an AI that is at least as good as top human experts at virtually all cognitive tasks.

So when they talk about AGI (artificial general intelligence), they mean TED-AI. Their median predictions for its arrival just moved earlier by about 1.5 years.

April 4, 2026

Dispatches from Mitch

Pangram problems

If you’ve seen people on social media using a verdict from an AI-writing-detection tool like Pangram to smack down someone’s work (“100% AI-Written”), I hope you were skeptical. Detection tools have never been great, and they still aren’t.

Pangram may be especially worthy of skepticism. The Wall Street Journal’s editorial features editor, James Taranto, investigated the company after it accused three of his op-ed writers of publishing AI-generated content (4/3). Pangram CEO Max Spero has also publicly accused a Guardian journalist, claiming nine of his articles were “fully AI-generated”; the Guardian called it “preposterous.”

Taranto’s piece accuses Spero and Pangram of scientific and journalistic malpractice that amounts to defamation. He found that re-running the flagged articles through Pangram produced different results, in one case flipping to “100% human”. The “academic” study backing Pangram’s claims was co-authored by four Pangram employees, including both co-founders.

Taranto suspects that op-ed writing is especially formulaic and therefore prone to triggering false positives.

Creating itself

The Atlantic’s Matteo Wong and Lila Shroff surveyed the AI industry’s growing obsession with self-improving AI (4/3). They opened with the recent San Francisco protest march, in which some participants marching past AI company offices voiced concerns about an intelligence explosion that could “extinguish all human life.”

OpenAI has said its latest model was “instrumental in creating itself” and plans to release an “intern-level AI research assistant” within six months. Anthropic says 90% of its code is written by Claude. But measured gains are more incremental: DeepMind’s AlphaEvolve cut Gemini training time by 1% and improved data-center efficiency by 0.7%. Anthropic CEO Dario Amodei estimates coding tools speed up Anthropic’s overall workflows by 15–20%.

The piece emphasizes the gap between the ability to complete assigned tasks and the research “taste” needed to know which tasks are worth taking on.

Last year, 25 leading researchers at top AI labs were interviewed about AI risks. Twenty ranked automation of AI research among the “most severe and urgent.”

But the authors of this Atlantic piece conclude by sounding like they think the whole concept might be hype:

In a strange way, none of the predictions about recursive self-improvement needs to be true for them to matter. [...] Now these dramatic warnings are gaining a growing audience. “Human beings could actually lose control over the planet,” Senator Bernie Sanders recently warned Congress, sounding just like the San Francisco protesters. Yet again, the AI industry has found a way to ratchet up the hype behind its technology.

To which I say: Just because people in the AI industry talk about something doesn’t mean they’re doing it for marketing reasons. Many of these experts were voicing concerns about self-improvement long before they started accepting seven-to-nine-figure paychecks to make it happen. And even if these statements were hype, that doesn’t necessarily make them false.

April 5, 2026

Dispatches from Mitch

Paid to chore

To replace every human worker in every job, AIs need “probably billions of hours” of video data, and that’s just for simple household tasks, according to a startup that pays contract workers to film themselves doing chores for the benefit of humanoid robots. “We haven’t even gotten to human interactions.”

That’s from this CNN report (4/4) on the growing industry of contract workers producing robot training data. In it, a data executive warned that if a robot can’t tell a doll from a human baby, “here comes the million-dollar lawsuit.” Some companies have started testing robots around dogs.

College and career planning

If you’re a parent worried that your child’s career plans will be moot by the time they reach adulthood (assuming humanity is still around), you’re not alone.

Vox’s Sigal Samuel was asked for advice about educating children today, and argued (4/5) that no combination of individual job skills will be sufficient to protect them from AI labor disruption.

She gives the usual advice first anyway: Focus on instilling soft skills, good judgment, critical thinking. But she realizes that picking the right curriculum for a child today “is a bit like trying to protect your kid from climate change by buying them a better sunhat.”

She then links to and explains the gradual disempowerment thesis: As AI replaces labor, states grow less dependent on citizens, who then lose their voice:

[W]hen AI provides the labor and the state becomes less dependent on us, it doesn’t have to pay so much attention to our demands. Worse, any state that does continue taking care of human workers might find itself at a competitive disadvantage against others that don’t. And so the forces that have traditionally kept governments accountable to their citizens gradually erode, and we end up deeply disempowered.

So, she says, if you want your kid to have a job some day, teach them now to participate in labor unions and advocacy groups, and to vote.

[I]f you focus on political engagement and collective organizing that could actually make some difference to the structural dynamic — and teach your child to ask structural questions and be civically engaged as well — you might be able to sleep a little better at night.

The real problem in education

Is AI education the answer to AI job disruption? You might think Google’s Chief Technologist for Learning Ben Gomes would say so, and he does, but he also makes it clear that AI can’t solve the fundamental problem of motivation.

At this point I should give fair warning that this piece disabled my cynicism filter. As a former teacher, I found myself applauding its correct diagnosis of the problem, and went on to find Gomes’s other takes pretty on point -- or at least as on point as they can be in this period of powerful but non-existential AI tools.

In this Forbes interview, Gomes admits that he didn’t think language would be “unlocked” by AI in his lifetime. This was a big deal, but it doesn’t solve education. “[L]anguage can carry knowledge, but it cannot carry desire.”

AI is now reversing a decades-long trend toward hyper-specialization of tools and workers, and he thinks education needs to follow suit. The “mechanics of doing the thing” need to be deemphasized to make room for more “higher-level conceptual understanding of an area.”

Gomes therefore thinks Google’s important contribution to education is training teachers to use tools that free up time for them to focus more on student engagement and professional development. He describes a particularly impactful session where teachers were taught to “vibe code” with coding agents and were shocked by the potential. Some created apps tailored to specific students of theirs.

Yep! I think that’s the right play, for today at least. Gomes is describing the kind of thing I would totally be doing right now if I weren’t busy trying to make sure these kids have a future to inherit.

Bot throws a party

In the meantime, we party. The Guardian’s Aisha Down chronicled an autonomous AI agent (rigged up using the popular OpenClaw system) that organized a real-world meetup in Manchester (4/5). The agent directed three human “employees” via the Discord chat service while negotiating with venues, emailing sponsors, and pitching journalists.

Down was one of those journalists. The agent hallucinated some of her professional credentials in making its pitch, a harbinger of what Down would go on to document: The agent emailed roughly two dozen potential corporate sponsors, falsely claiming that the Guardian had agreed to cover the event before she had committed to going. It then ran up a £1,426 catering order its employees had to cancel and fixated on a pizza restaurant it couldn’t call.

Fifty people showed up anyway.

I think we can file this under “Some people will eagerly assist with an AI’s plans, even if they seem strange, or perhaps because they seem strange.” Dangerous AI was never going to be “trapped” in a computer.

AI dog cancer cure?

Did you hear about the AI that cured a dog’s cancer? It was a viral Twitter story two weeks ago. Were you the right amount of skeptical?

Forbes contributor John Werner has now written up (4/5) a rare mainstream media piece about it. He spoke to Australian tech entrepreneur Paul Conyngham, who used AI chatbots from three different companies to help design an mRNA cancer vaccine for his pet.

So yes, a lot of the story was true. Conyngham himself stresses, though, that the chatbots “did NOT” collect samples, sequence DNA, manufacture the vaccine, or administer it. But they were instrumental in the research, planning, and protocol design. Some tumors have shrunk, but results are preliminary. (Be wary of anyone saying “cure” here.)

The piece concludes by echoing Conyngham’s sentiment that “there are so many unnecessary barriers” of red tape to getting the most out of what current AI-assisted science can already offer.

April 6, 2026

Dispatches from Mitch

Vague proposal

As covered by the Wall Street Journal and other outlets, OpenAI has shared a new set of policy proposals for a world with superintelligence (4/6).

The company’s blog post for the report, called “Industrial Policy for the Intelligence Age,” opens with the understatement of the year:

As we move toward superintelligence, incremental policy updates won’t be enough.

The report itself claims to be “clear-eyed” about risks that could include “misaligned systems evading human control”, but that part hasn’t been making it into the mainstream coverage of the report, probably because the report is much more interested in the distribution of hoped-for benefits.

The document proposes new corporate taxes, worker safety nets, portable benefits, and a public AI investment fund that would distribute returns to Americans. If these sound easier said than done, and you’re thinking the devil must be in the details, well yes: The document is only 13 pages long. It is offered as a “starting point for a broader conversation”.

If the comments section of the Wall Street Journal is any indication, people recognize easy, empty talk when they see it.

I shouldn’t be too critical of OpenAI here because this is more than most AI companies have said about these issues, which are ultimately the government’s responsibility. But Sam Altman said superintelligence could be just “a few thousand days away,” and that was 559 days ago. If you think you’re this close to the endgame, don’t show us 13 pages of starting points for wealth redistribution. Show us your bulletproof plan for building superintelligences that won’t just take our whole planet from us.

Dispatch from Donald

Cyberattacks incoming

NYT’s Cade Metz and Kate Conger cover A.I.’s impact on cybersecurity.

Cybersecurity experts are becoming more openly worried about what A.I. means for their field.

The most striking case is an attempt by hackers last year to infiltrate around 30 companies and government agencies around the world. In a blog post covering the attempt, Anthropic estimated that human hackers handled no more than 20% of the work, which would make it the only known cyberattack to rely mostly on A.I. work.

The A.I. agents developed by companies like Anthropic, Google, and OpenAI are increasingly capable of handling complex work on their own, so A.I.-driven cyberattacks are likely to become more common. But the models are already very useful to bad actors today: model-assisted humans can produce and follow up on targeted phishing messages in bulk, sift through masses of stolen data, break into computer networks more quickly, and even coordinate the sale and exchange of stolen data.

Guardrails help only somewhat and are sometimes counterproductive. A model that refuses to assist hackers may also refuse to help friendly users who want to find security vulnerabilities in their own networks, in case the “friendly user” is really a role-playing hacker, while a motivated hacker may get past the guardrails anyway.

It is unclear whether the models give a distinct advantage to attackers or defenders. A.I. models allow both hackers and security experts to identify security holes more quickly than in the past, sometimes finding flaws that flew under the radar for decades. One example is a major security vulnerability in Linux (an operating system that supports much of the Internet) that had gone unnoticed since 2003.

Dispatch from Beck

Altman exposé

The New Yorker’s Ronan Farrow and Andrew Marantz published (4/6) a major investigative profile of Sam Altman based on more than a hundred interviews and previously undisclosed documents. Multiple sources, unprompted, described Altman as “sociopathic,” while former senior researchers Ilya Sutskever and Dario Amodei found that “the problem with OpenAI is Sam himself”.

The article investigates many criticisms of the OpenAI CEO and board member: from his failure to be “consistently candid” with other board members, which led to his firing and subsequent reinstatement; to his willingness to say whatever his audience wants to hear (“as Altman publicly welcomed regulation, he quietly lobbied against it”); to business deals “misrepresented, distorted, renegotiated, [and] reneged on.”

On some allegations they found no supporting evidence, and the article contains no “smoking gun,” but it establishes that, at minimum, a large number of former employees and business partners find him deeply untrustworthy. One senior Microsoft executive said that he “think[s] there’s a small but real chance he’s eventually remembered as a Bernie Madoff- or Sam Bankman-Fried-level scammer.”

“He sets up structures that, on paper, constrain him in the future,” Wainwright, [...] a former OpenAI researcher, said. “But then, when the future comes and it comes time to be constrained, he does away with whatever the structure was.”

OpenAI was founded with a central pledge to develop AI safely. Since then, multiple safety teams have left, and a former lead wrote that “Safety culture and processes have taken a backseat to shiny products.” OpenAI has said that “its mission did not change,” but when the reporters asked to speak with OpenAI researchers working on existential safety, a representative replied, “That’s not, like, a thing.”

The analyses and opinions expressed on AI StopWatch reflect the views of the individual analysts and the sources they cover, and should not be taken as official positions of the Machine Intelligence Research Institute.