Dispatches from Mitch

Summit wrap-up

Just as it looked like the U.S.-China summit was ramping up, it ended with a whimper: no big deals struck, no great tensions resolved. Talks on AI seem to have been crowded out by other matters.

Coverage by TIME’s Miranda Jeyaretnam described a disconnect in what the two parties were there to do. One expert is quoted as saying the U.S. brought more of a “trade show” for American exports, while China wanted more political discussion over matters like Taiwan.

Remarks from U.S. Treasury Secretary Scott Bessent yesterday, about jointly working to secure AI from misuse by non-state actors, are now interpreted as referring to future talks with no specified date.

And despite reports that the U.S. would allow sales of high-end Nvidia chips to China (if Beijing would let companies buy them), there’s no indication of any actual agreement yet. Bessent, yesterday, had said there was a lot of “back and forth.”

That’s not to say AI chips weren’t affected by the talks. We know the U.S. asked China to apply more pressure on Iran over its closure of the Strait of Hormuz; this affects chips because the Iran conflict has pinched the flow of chemical products used in their manufacture. And Taiwan, the world’s leading chip maker, imports 90% of its energy, with 40% of its natural gas sourced from the Middle East last year.

But here, too, there’s no sign that China, the biggest buyer of Iranian oil, has agreed to do anything at all. All we have is a statement from China’s foreign ministry that the “shipping channels should be reopened as soon as possible.”

A piece from The Guardian today quotes a Chinese adviser’s official summary of the event. It says the two countries have “reached a new point of equilibrium.”

Grade inflation, boo!

If chatbot-assisted cheating in school is rampant, and mostly going unpunished, then shouldn’t average grades be going up?

They are, according to a paper from UC Berkeley’s Igor Chirikov, as reported by the Wall Street Journal. Chirikov compared grades from classes at a “large Texas public university,” dividing them into more and less “AI-exposed.” A class is more exposed if it involves more writing or coding — domains where AI is readily applied.

Looking at more than half a million grades from 2018 to 2025, Chirikov found 30% more As (vs. A- or B+ grades) in the more AI-exposed courses, starting in 2023. ChatGPT was first released in November 2022. The more take-home assignments and homework in a class, the more likely a grade was to be an “A.”

Now, this doesn’t necessarily mean the higher grades are due to cheating; some of these grades might have been earned through responsible use of chatbots as tutors. But as a former teacher and student, I’m going to have to pick the more cynical interpretation.

That said, I’m also going to give a lot of cheaters the benefit of the doubt and say they wouldn’t be cheating if it weren’t so easy and ubiquitous. In my experience, students aren’t proud to discover how readily they can be seduced by a shortcut.

This may be part of why AI is so disliked by Gen Z, many of whom are graduating this month. Perhaps you’ve seen this viral video of a University of Central Florida commencement speaker getting booed for saying that “artificial intelligence is the next industrial revolution.”

Dispatches from Donald

Musk v. OpenAI ends on a question of credibility

Elon Musk’s lawsuit against OpenAI is approaching its close (see here for our most recent post). AP News’ Barbara Ortutay and Matt O’Brien gave the most concise overview of the questions that the jurors will soon face:

Did Elon Musk file this lawsuit within the time set by the statute of limitations? If he did not, all other questions may be moot.

Did OpenAI have a “charitable” trust, and was that trust broken by its executives?

If so, did Microsoft aid and abet the breaking of that trust?

Sam Altman’s Tuesday cross-examination was covered extensively by CNBC. Musk’s attorney asked Altman if he was “completely trustworthy.” Altman first answered, “I believe so,” and then, “I’ll just amend my answer to ‘yes.’”

Zico Kolter, who chairs OpenAI’s Safety and Security Committee, testified on Tuesday about two instances in which he formally requested that OpenAI delay a model’s release. He also spoke of other occasions when safety concerns prompted him to request more information prior to a release. But Breitbart, like several of the outlets I read, focused almost purely on interpersonal drama: the morale and motivation of OpenAI’s employees, Musk’s management style, and whether Musk or Altman was the bigger liar.

Both sides pressed hard on the credibility of their opposition: Of Sam Altman, Musk’s attorney said, “Five witnesses in this trial, all people that he’s known for years and worked with, called him a liar under oath.” Meanwhile, one of OpenAI’s attorneys insisted that “Mr. Musk is the one whose testimony is contradicted by every other witness.” The Musk team’s closing argument to the jury included only a passing reference to AI safety.

The jury is expected to begin deliberating on Monday. Its verdict will inform Judge Gonzalez Rogers’s final decision, which could compel the payment of billions of dollars in damages to OpenAI’s charitable arm.

Generative AI in Cannes

Reuters reports from the Cannes Film Festival that the debate over generative AI in film-making has shifted from “yes or no” to “when and how.” Directors still agree that AI should not be used to replace actors or scriptwriters, but other roles in the production and post-production phases don’t merit the same protection. Director Xavier Gens framed it in terms of the eight months and more than $2 million he could have saved had he used AI to produce his 2024 shark attack film Under Paris.

As the film industry would tell it, “acting and writing” are roles performed by humans, and everything else — visual effects, dubbing, editing — is merely an automatable “task.”

Dispatches from Alana

An AI aesthetic

We often hear about artists decrying AI use and asserting that AI-generated work will never be true art.

The Hollywood Reporter’s profile of AI filmmaker Zack London is an interesting counter to that narrative. In the piece, London’s AI-generated sci-fi films are framed as a new kind of art. Quoting from the article:

At a moment when Hollywood and Silicon Valley are still arguing over what AI actually is — a cost-cutting tool, a visual gimmick or the foundation of an entirely new cinematic language — Gossip Goblin offers a more provocative possibility: that AI already has an emerging aesthetic, and that it belongs not to studios but to individuals willing to wrestle it into something personal. His films don’t reject the medium’s telltale strangeness — the dream logic, the synthetic textures, the sense of images half-remembered rather than fully observed — but lean into it, suggesting a form of storytelling that feels less like traditional filmmaking than like visualized thought.

Granted, part of the reason for this is that there’s still a large human component. London uses human voice actors, scripts, shot lists, and a narrative; he’s not just inputting a prompt or pressing a button. He frames AI as a way to do more with a small budget and team, saying:

What’s exciting about AI is that it might allow people to create ambitious genre storytelling without needing hundreds of millions of dollars. Historically, if you wanted to make large-scale science fiction, you needed a massive studio production. Now a handful of people might be able to create something visually comparable with far fewer resources.

His positive reception online and in Hollywood supports this anti-slop framing: he has 1M followers on Instagram, and Hollywood studios and directors have reached out to discuss “what the future of filmmaking might look like.”

Parrots, consciousness, and marketing hype

Arwa Mahdawi’s humorous rebuttal (published in The Guardian) of Richard Dawkins’ assertion that Claude “may be conscious” was a fun read for the first few paragraphs. Then I started to bristle.

Citing the famous paper “On the Dangers of Stochastic Parrots: Can Language Models Be Too Big?”, which Timnit Gebru co-authored, Mahdawi said it was no wonder Dawkins got confused. Gebru and her co-authors had predicted this very danger: “that the coherent text generated by these models could lead people into perceiving some sort of ‘mind’ when what they’re actually seeing is just pattern-matching and text prediction” (Mahdawi’s phrasing).

Why the bristle? AI experts have repeatedly challenged the stochastic parrot framing (one example here) since predicting the next token means genuinely learning and internalizing specific patterns and then generalizing those to create new outputs and outcomes. MIRI explains why LLMs are not simply “parroting” in the post linked here.

I think it’s also important to distinguish sentience from superintelligence. An AI does not have to be conscious to do the “work” of intelligence, which breaks down into prediction and steering. An AI does not have to be conscious to exceed humans at every task across every domain. And an AI does not have to be conscious to pose extreme risk; it merely has to be able to steer the world in specific directions, something agentic models are already doing to some extent.

Gebru calls safety concerns and sentience talk marketing hype. I struggle to see how calling a technology exceedingly dangerous helps the companies building it. As my colleague Joe said recently, “You don’t hear pharma startups bragging ‘Our new drugs might cause a global pandemic! Invest in us!’” I’m inclined to agree with Joe, especially since AI leaders expressed these concerns long before labs were building AI systems ... in other words, before there was a product to hype.

The increase in existential dread and end-of-the-world talk reported by Bay Area therapists whose clients are working in AI, covered in the San Francisco Standard last month, also seems to indicate that safety concerns are sadly not a marketing ploy. I wish they were!

Dispatches from Beck

Half of New Articles?

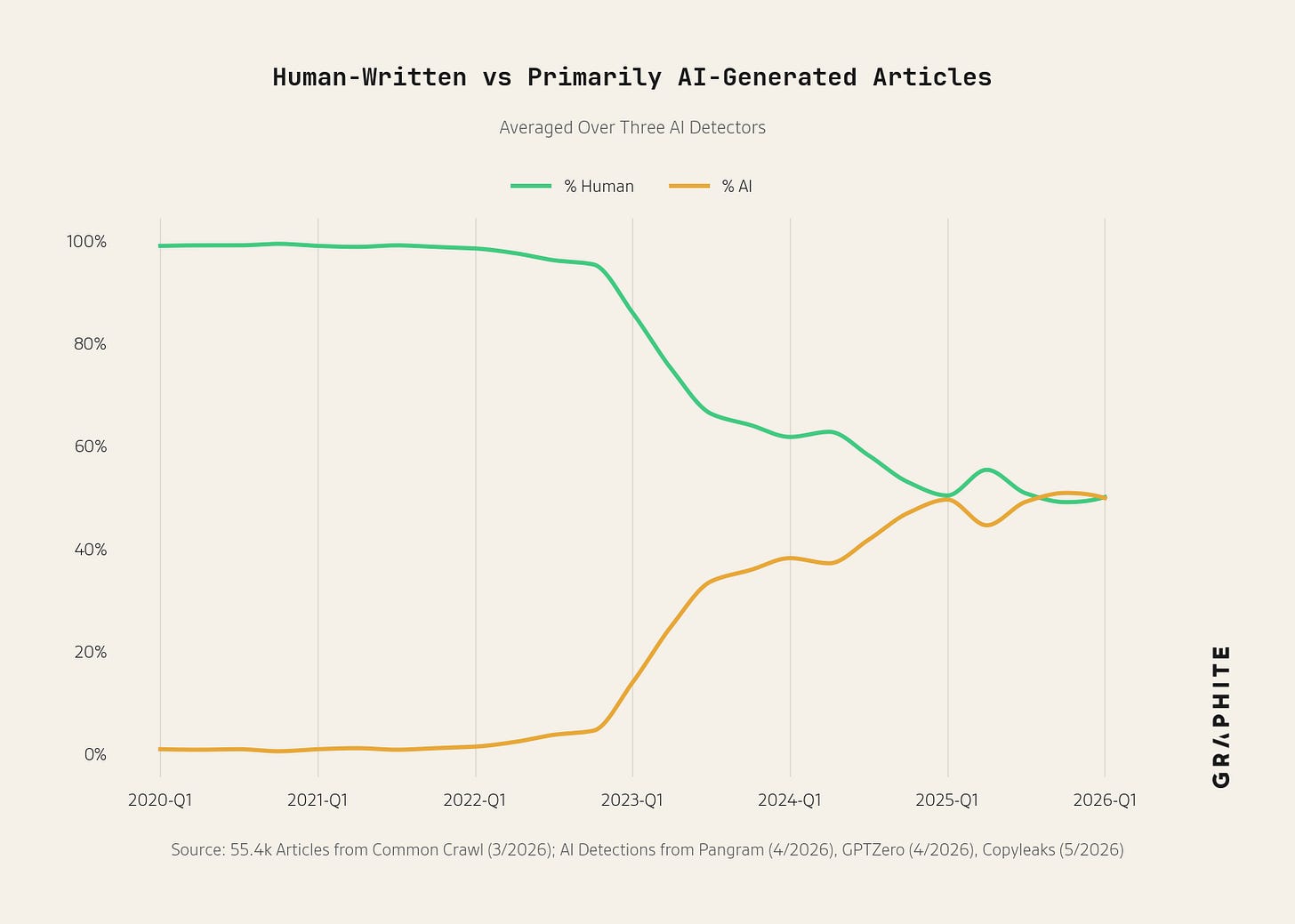

Axios reports on a new analysis by Graphite, a web and advertisement research firm, that finds AI generated about half of all new English-language articles on the internet in 2025 and 2026.

Graphite’s methodology has some limitations, but it likely does capture some real information. They tested their detection tools (Pangram, GPTZero, and Copyleaks) against articles they generated with AI and against text from 2020-2022 (prior to mainstream AI diffusion), and they estimate that both the false positive and false negative rates are below 2%. We don’t know how well their systems would do with articles better designed to avoid detection, or with those that use AI tooling to identify and change text that classifiers consider AI-generated.
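To see how much those error rates could move the headline number, here is a minimal sketch of a standard prevalence correction (often called the Rogan-Gladen estimator). This is not Graphite's method; the function name is hypothetical, and the 20% miss rate in the second example is an invented figure used only to illustrate what evasion-tuned text might do.

```python
def corrected_share(observed_share, false_positive_rate, false_negative_rate):
    """Estimate the true AI-written share from a detector's flagged share.

    Uses observed = true * sensitivity + (1 - true) * false_positive_rate,
    solved for `true`. All inputs are fractions between 0 and 1.
    """
    sensitivity = 1.0 - false_negative_rate
    denominator = sensitivity - false_positive_rate
    if denominator <= 0:
        raise ValueError("detector is no better than chance; cannot correct")
    estimate = (observed_share - false_positive_rate) / denominator
    # Clamp to a valid proportion in case of noisy inputs.
    return min(1.0, max(0.0, estimate))


# Reported rates (assumed to be exactly 2% each here): the estimate stays near 50%.
reported = corrected_share(0.50, 0.02, 0.02)

# Hypothetical: detectors miss 20% of AI text that was edited to evade them.
evasive = corrected_share(0.50, 0.02, 0.20)

print(f"{reported:.3f} {evasive:.3f}")
```

With symmetric 2% errors the correction leaves a 50% reading essentially unchanged. Under the hypothetical higher miss rate, the same reading would imply a true share above 60%, so undetected evasion pushes the real figure up rather than down.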

So take the numbers with a few grains of salt, particularly the plateau around 50%. Graphite suggests that the ratio hasn’t risen above that because AI content does less well in search engines. That seems plausible but uncertain, particularly given the study’s limitations.

But even interpreted as a floor, this still tells a story of blazingly fast technological diffusion. A decade ago, the computers couldn’t string coherent sentences together; now they write half or more of all new articles online.

Dispatches from Stefan

Five days vs. half a decade

Apple spent five years engineering its newest macOS security feature, Memory Integrity Enforcement. Researchers at Palo Alto security firm Calif cracked it with Anthropic’s Claude Mythos model in just five days. The Wall Street Journal reported on the exploit yesterday.

Defenders know the systems they’re protecting. Attackers have to figure them out from the outside. Time, knowledge, and resources have usually favored the defenders. That’s most of what’s kept people’s devices safe.

That asymmetry is eroding. As Calif’s CEO explained, AI is not inventing new attack techniques. Rather, it’s applying existing ones that used to take a team of elite specialists months to carry out.

It’s not an isolated example, either. Earlier this year, Anthropic’s AI dug up more than 100 high-severity bugs in Firefox in two weeks. Per the article, that would take the broader security community about two months.

Michał Zalewski, the former Google researcher who reviewed Calif’s work, called the hype around Mythos “overblown” but acknowledged that such tools now enable “meaningful vulnerability research and code auditing.”

You can treat each new AI capability demonstration as a one-off curiosity. But cybersecurity is a domain where capability gets weaponized fast. And what used to be measured in ‘how long until someone cracks this’ is now measured in ‘how soon after the next model drops.’

The analyses and opinions expressed on AI StopWatch reflect the views of the individual contributors and the sources they cover, and should not be taken as official positions of the Machine Intelligence Research Institute.

> An AI does not have to be conscious to exceed humans at every task across every domain.

Arguably false, depending on what is meant by "conscious". (I think the other claims are fine.)