"The reason we are able to have wholesome discussions"

Summit updates, papal wisdom, doubling rates, and more

Dispatches from Mitch

Summit update

Talks in China began in earnest today. The word from Reuters is that the White House has given the go-ahead for sales of Nvidia’s H200 AI chips to select Chinese customers. These are good chips, the kind we said yesterday would be a boon to Chinese companies looking to train frontier models.

A year ago, the administration worked out a deal to receive a 25% fee for any of these chips sold to China.

But nothing is guaranteed to go through. Beijing has recently been wary of allowing Chinese AI companies to buy American chips, fearing they might be tampered with by U.S. agencies. Beijing is also trying to foster the Chinese chip industry by pressuring companies to buy domestic.

Kyle Chan, a China expert from the Brookings Institution, chatted about this reluctance to buy American (and other topics) with the New York Times’s Ross Douthat. Chan takes it as evidence that the Chinese government is (as we keep hearing from other analysts) more interested in diffusing AI across its economy and population than in chasing “something approaching an artificial superintelligence, some kind of almost machine god.” But Chan also believes that many at the Chinese AI companies are, in fact, aiming for superintelligence. And for that, they need the chips.

In any event, if staying far ahead of China is the goal of the U.S. administration, I would expect it to extract significant concessions from China in exchange for access to those chips. But I see no mention of any, at least not yet.

The administration still talks like it cares a lot about America’s lead. Treasury Secretary Scott Bessent told CNBC that “The reason we are able to have wholesome discussions with the Chinese on AI is because we are in the lead. I do not think we would be having the same discussions if they were this far ahead of us.” I can’t imagine those words doing much to promote a spirit of cooperation, so maybe he means them?

With leverage from that lead, Bessent says the two sides will be discussing best practices to keep AI out of the hands of non-state actors. He also claims to be interested in getting “the most innovation and the highest level of safety.” On the topic of America’s most powerful new models, the ones with restricted access, he says, “So we’re going to put in U.S. best practices, U.S. values on this, and then roll those out to the world.”

I’ve noticed two other wrinkles to the talks reported in the last 48 hours. One of them is Taiwan: Per the above CNBC report, Chinese President Xi Jinping emphasized at the talks that Taiwan is the most important issue for good U.S.-China relations.

More than a politically sensitive spot for China, which sees the island as its rightful territory, Taiwan is where the vast majority of the world’s high-end AI chips are produced. As argued in a Wall Street Journal op-ed today, a Chinese move to blockade or invade Taiwan would effectively cut the U.S. off even from the AI chips it makes domestically, because these require integration steps still performed only in Taiwan.

The other new wrinkle for the talks comes from OpenAI. FoxBusiness reports that the company’s vice president of global affairs, Chris Lehane, said OpenAI would support a U.S.-led global governance body for AI that would include China. He said it could resemble the International Atomic Energy Agency (IAEA), which sets safety standards and does monitoring to prevent the proliferation of nuclear materials and capabilities.

I confess to some confusion about the timing and source of the proposal. OpenAI has been careful enough about its relations with the White House that I doubt they would have proposed a new international agency during the summit without the administration’s approval. But people in and connected to the administration have recoiled at any plans to give other countries a say in America’s AI business.

My best guess is that Lehane’s proposal has less to do with diplomacy and more to do with the turf war among U.S. agencies over who gets to take the lead on testing models and setting standards. Lehane is suggesting the new governing body could be formed by connecting different nations’ AI safety institutes to the Commerce Department’s Center for AI Standards and Innovation. That could be one way of ensuring that the more business-friendly of the competing U.S. agencies ends up in charge; the alternative is the more national security-focused apparatus proposed by the Office of the National Cyber Director.

I have no problem with an international agency modeled after the IAEA. The nuclear agency has a strong track record, and something like it would be useful in the long term if or when the two countries agree to end the race to superintelligence. For now, though, the race is really only between the U.S. and China, and even more so among U.S.-based companies. I think the two countries’ leaders could end the race right now, if they agreed to do so, using only domestic policy and some soft power over would-be upstarts.

Dispatches from Alana

Papal wisdom

Pope Leo spoke at La Sapienza university in Rome today, condemning Europe’s rising military spending and the use of AI in warfare. (This Reuters piece emphasizes the first, this Associated Press piece the second.)

As reported by the Associated Press, the Pope is concerned about AI use in the Ukraine, Gaza, and Lebanon conflicts, stating it demonstrates “the inhumane evolution of the relationship between war and new technologies in a spiral of annihilation.” He wants better monitoring to ensure that AI “does not absolve humans of responsibility for their choices and does not exacerbate the tragedy of conflicts.”

As for the military spending, he states: “Let us not call ‘defence’ a rearmament that increases tensions and insecurity, impoverishes investments in education and health, betrays trust in diplomacy, and enriches elites who care nothing for the common good.”

I feel similarly about the notion of “winning” the AI race, a race that, in my opinion, has no winners. To attempt a papal tone: Let us not call it ‘winning’ if a country manages to create a technology that could destroy the human race. Let us not be killed by our own hubris.

Pope Leo’s stated AI concerns thus far seem to be primarily about human dignity, labor, inequality, surveillance, and warfare, at least according to Axios’s coverage of an encyclical he is poised to sign that would likely highlight AI as a key priority for the papacy. (There are possible hints of catastrophe-level concerns too, though. As we covered previously, the pope’s post on X for the 40th anniversary of Chernobyl stated the disaster “serves as a warning about the inherent risks in the use of increasingly powerful technologies.”)

Pope Leo’s predecessor Francis, while also focused on AI in warfare and its impact on individual lives, stated in 2023 that AI could “pose a risk to our survival and endanger our common home.” This is likely at least part of the reason he called for an international treaty to regulate its use.

More StopWatch coverage of papal views on AI here.

Growing opposition

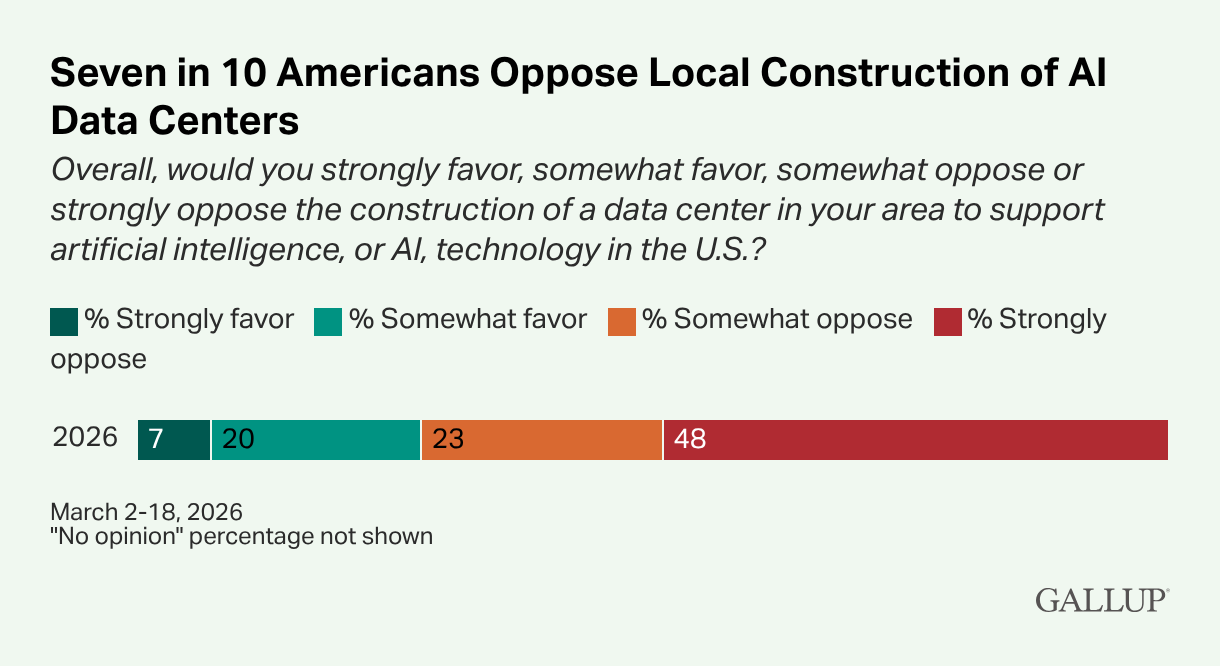

A new Gallup poll found that 7 in 10 Americans oppose data center construction in their area.

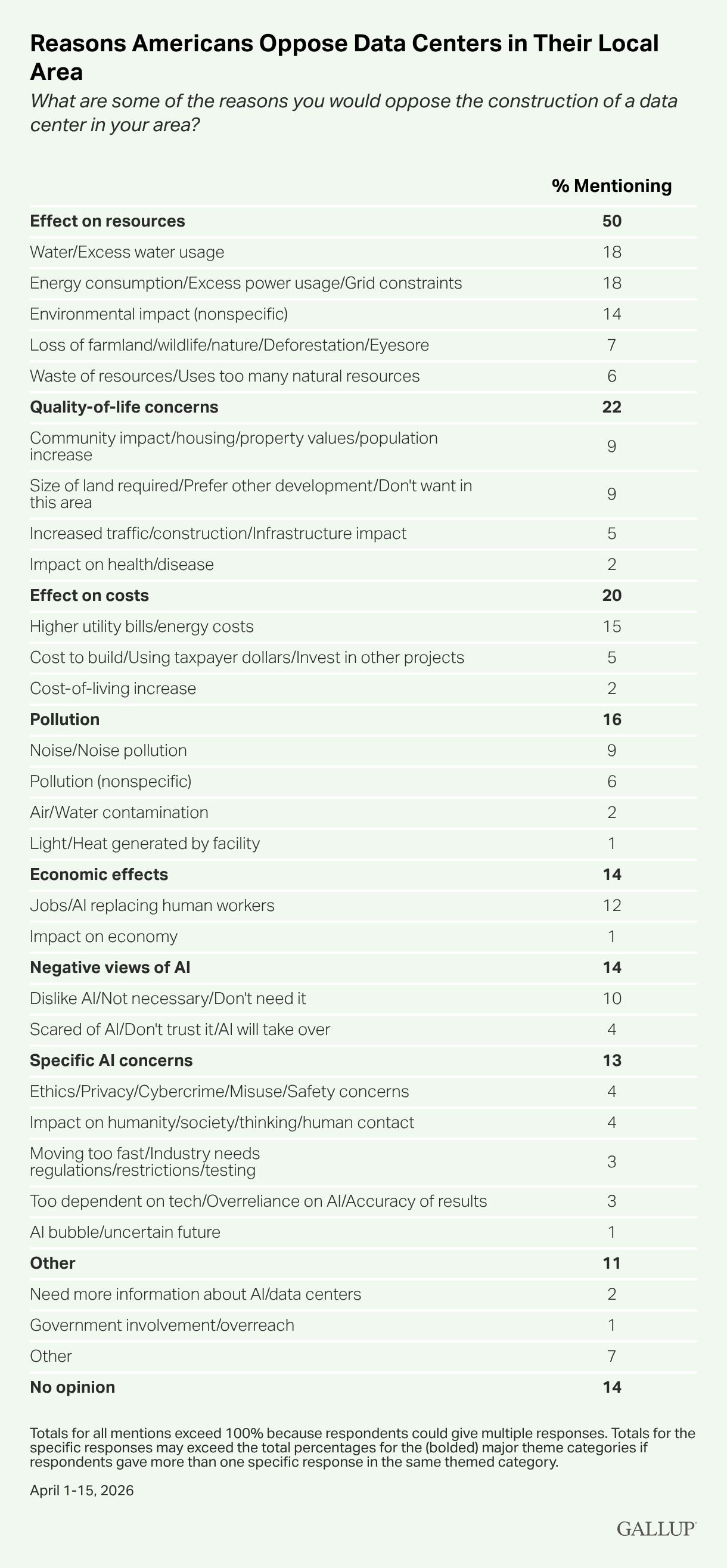

Environmental concerns were the most commonly cited reason, followed by quality of life and cost-related concerns (e.g. utility bills, taxpayer money).

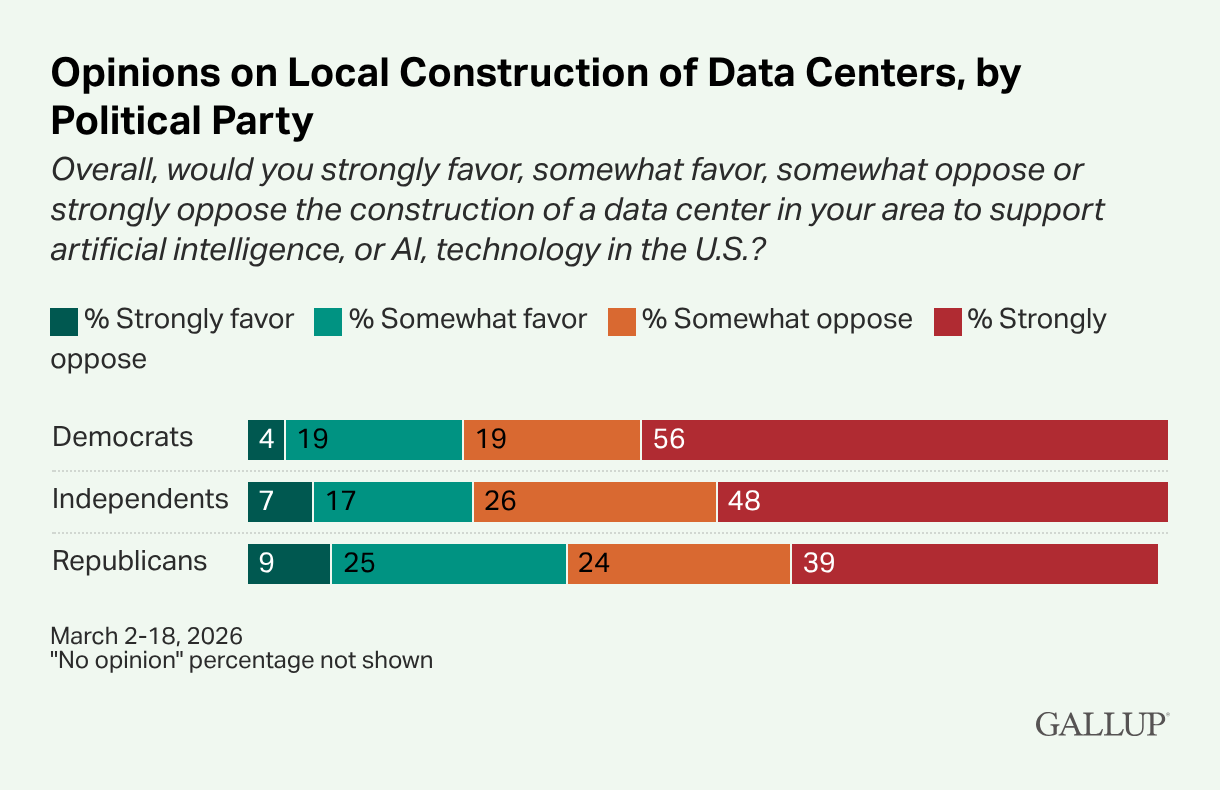

Democrats more frequently fell within the “strongly opposed” camp, though opposition was well-represented among both parties.

In comparison, the same poll found that only 53% of Americans oppose local construction of nuclear power plants, and the highest opposition ever measured on that question, which has been asked since 2001, was 63%.

While this is the first time Gallup has polled Americans about data centers, a Washington Post piece implies that opposition has increased substantially in recent years, citing recent moratoriums on data centers, the resignation of Pennsylvania town council members over backlash to a data center plan, and the reversal of a 2023 Virginia poll that showed fewer than 1 in 4 voters opposed to local data centers. In a poll last month, that share had risen to 59%.

My take? The NIMBY nature of the opposition could be disheartening for those focused on existential risk. Only 4% of respondents cited “Scared of AI/don’t trust AI/AI will take over” as the reason for their opposition. Yet I personally don’t see this as disheartening at all. On the contrary, this growing opposition shows that when people understand how something will affect them directly, they will take action.

The next step is to get the word out about existential risk — because if there’s one thing that could directly affect every single person, no matter where their backyard happens to be, this is it.

Dispatches from Donald

AI hallucinations discovered in biomedical papers

CBS News’s Megan Cerullo reports on an audit of millions of published biomedical papers. More than 4,000 fabricated citations were discovered across almost 3,000 academic papers. None of the erroneous citations had been corrected or retracted.

AI hallucinations are not the only possible source for these mistakes, but AI is probably a contributing factor. The production rate of fabricated citations has increased twelve-fold over the past three years.

The audit was published in The Lancet, a medical journal. If you’re interested, you can read the report here.

AI cyber capabilities may be improving more quickly than ever

The AI Security Institute (AISI) is a research organization in the United Kingdom’s Department for Science, Innovation and Technology. They study AI, trying to evaluate risks and propose solutions. One of the things they’re studying is “doubling time,” or the time it takes for AI to become twice as good at something.

In November 2025, AISI judged that, with respect to cybersecurity tasks, the doubling time was 8 months. In February 2026, AISI adjusted this to 4.7 months. AISI recently published an article on a possible acceleration in doubling times. This follows tests that AISI ran on two recently announced AI models, Claude Mythos Preview and GPT-5.5.
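To get a feel for what those two figures mean, here is a rough back-of-the-envelope sketch. It assumes capability grows at a steady exponential rate (a simplification of mine, not something AISI claims), and the function name is made up for illustration. Under that assumption, an 8-month doubling time works out to a bit under a threefold improvement per year, while a 4.7-month doubling time works out to nearly sixfold.

```python
def annual_growth_factor(doubling_months: float) -> float:
    """How many times better per year, assuming steady exponential growth.

    A doubling time of T months means 12 / T doublings fit into one year.
    """
    if doubling_months <= 0:
        raise ValueError("doubling time must be positive")
    return 2.0 ** (12.0 / doubling_months)


# The two doubling times quoted above (in months).
for months in (8.0, 4.7):
    factor = annual_growth_factor(months)
    print(f"doubling every {months} months -> about {factor:.1f}x per year")
```

The point of the comparison is that shaving a few months off the doubling time roughly doubles the yearly rate of improvement.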

One of the ways that we measure AI capabilities is by measuring “time horizons.” Last week, in a discussion about other AI metrics, my colleague Beck wrote that a time horizon evaluation “tracks tasks that frontier AI models can complete based on how long it would take a human to complete the same task.” The success criteria vary between evaluations; AISI requires an 80% success rate for an AI model to pass.

To determine how long cybersecurity tasks take, AISI establishes a baseline by timing human experts on some tasks and has human experts estimate the time required for others. AI models are tested on all of these tasks, and their success rates are then extrapolated to make estimates across a broad range of tasks.
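For readers who want to see how a success threshold turns into a single "time horizon" number, here is a minimal sketch. It assumes success rates are modeled with a logistic curve over the logarithm of human task length, which is one common way to do this kind of extrapolation; I have not confirmed that AISI fits its data this way. The function names and the coefficients are hypothetical and chosen only for illustration.

```python
import math


def success_probability(task_minutes: float, intercept: float, slope: float) -> float:
    """Modeled chance of success on a task that takes a human `task_minutes`.

    Logistic curve over log2(task length); a negative slope means
    longer tasks are less likely to be completed.
    """
    z = intercept + slope * math.log2(task_minutes)
    return 1.0 / (1.0 + math.exp(-z))


def time_horizon(target_rate: float, intercept: float, slope: float) -> float:
    """Task length (in human minutes) where modeled success equals `target_rate`."""
    logit = math.log(target_rate / (1.0 - target_rate))
    return 2.0 ** ((logit - intercept) / slope)


# Hypothetical fitted coefficients, NOT taken from AISI's results.
INTERCEPT, SLOPE = 6.0, -0.8

horizon_80 = time_horizon(0.80, INTERCEPT, SLOPE)  # stricter 80% threshold
horizon_50 = time_horizon(0.50, INTERCEPT, SLOPE)  # looser 50% threshold
print(f"80% horizon: {horizon_80:.0f} min, 50% horizon: {horizon_50:.0f} min")
```

With a negative slope, the 80% horizon always comes out shorter than the 50% horizon for the same model, which is worth keeping in mind when comparing numbers from evaluations that use different success criteria.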

In each of AISI’s tests, the models are restricted to 2.5 million tokens, each token representing a chunk of text being read or written by the AI model. Holding the budget fixed allows AISI to more directly measure any improvement in capabilities. The cap limits our understanding of real-world performance, however, and also means that AISI’s results fail to track the dropping price of tokens (they note this in their article). Per a paper published in March 2026, 2.5 million tokens cost just a few dollars, so this quantity is well within the reach of ordinary people, to say nothing of state-backed actors. In other words, anyone with access to one of these models can access the expertise of a cybersecurity expert for the price of a ham sandwich.

The token cap also means that we don’t have a firm upper bound on the capabilities of Mythos Preview or GPT-5.5. Performance often scales with an increased token budget. Additionally, some of the tasks were completed so well that the models probably could have accomplished even harder tasks.

AISI makes a number of hedges worth pointing out:

- The tasks were not long enough to determine deterioration across longer task lengths, which is a known failure mode.
- There were a limited number of tasks, especially on the longer end (only six tasks had a duration of 8+ hours), and a larger sample could have seen worse or better performance on average.
- The human experts could have also performed worse or better on average, across a larger sample of tasks.

Even so, there have been definite improvements. As people often say, the models today are as bad as they are ever going to be — they’re only getting better from here. If we’re going to do something about AI, like coordinate on an international treaty to stop frontier research before we build something really dangerous, then we might not have much more time.

The analyses and opinions expressed on AI StopWatch reflect the views of the individual contributors and the sources they cover, and should not be taken as official positions of the Machine Intelligence Research Institute.
