There and back again

Chinese talent flight, Musk vs Altman, roasted chatbots, and yet another ill-advised superintelligence startup

Dispatches from Joe

Get back here!

Last December, Meta (the tech company that owns Facebook) acquired a Chinese AI startup called Manus for $2 billion. Manus operates digital infrastructure, mostly “wrappers”, on top of American AI models; so it’s most likely not their AIs, but their tech talent, that Meta sought to poach.

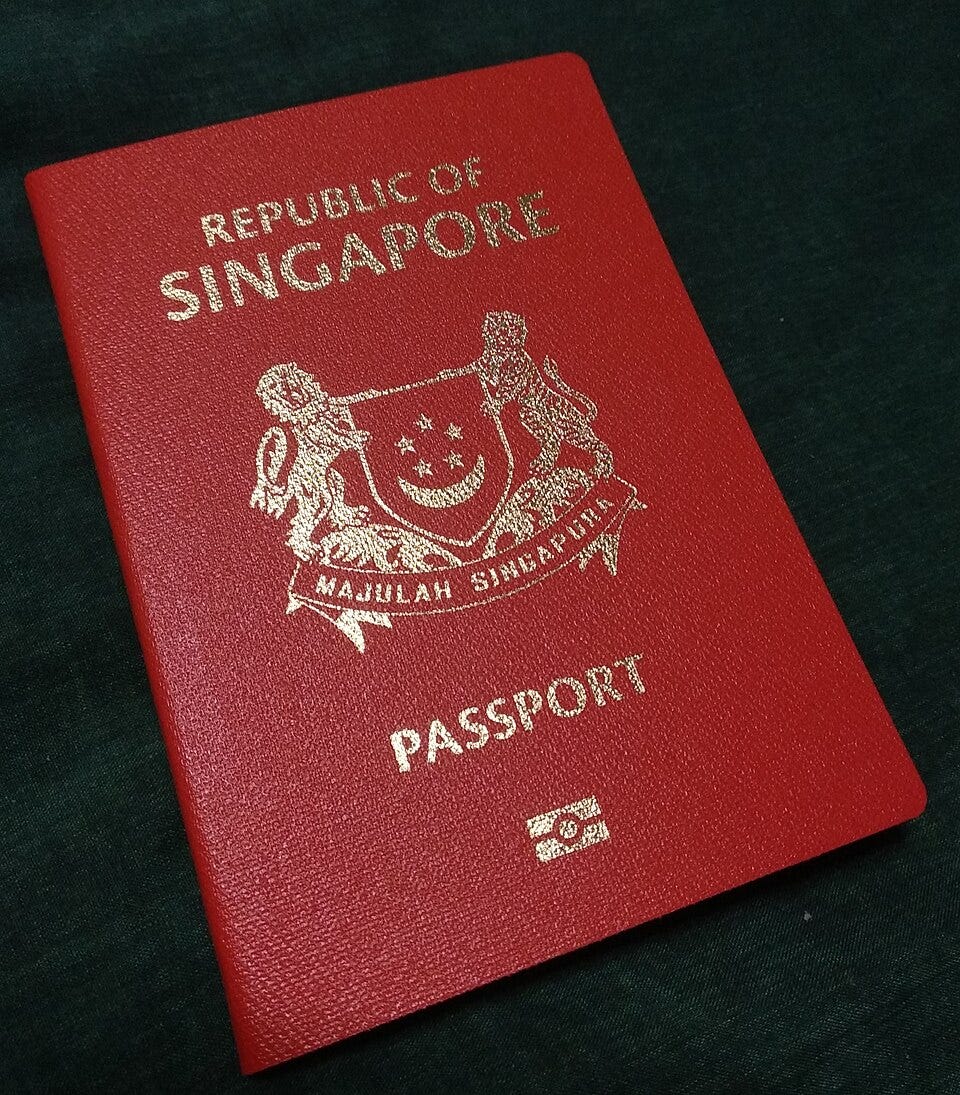

This isn’t exactly unusual; Chinese startups jump ship frequently enough that the practice has acquired its own business jargon. Moving to the U.S. has been called “China-shedding” by some, or “Singapore-washing” for those who seek refuge on Singapore’s neutral soil.

China, it seems, has had enough. Reuters reports (4/27) that Chinese regulators have ordered Meta to roll back the acquisition. This is no doubt a concerning development for the Manus staff who have been working in Meta’s offices in Singapore for months. It’s considerably worse news, one imagines, for the startup’s two cofounders, who were summoned to Beijing in March and have since been barred from leaving the country.

If you’re thinking, “They can do that?” Probably. Sort of. China likely can’t extradite researchers, but in addition to the constrained founders, it has leverage in the form of physical and intellectual property, legal arguments, and long experience applying soft power to pressure Chinese citizens abroad. It’s likely enough to force Meta and Manus to the negotiating table to try to work out a way to salvage the deal.

If you’re thinking, “But why?”, well, a good guess is that China is starting to see its AI engineers as a key strategic asset. At least one analyst has made an explicit comparison to U.S. chip export controls. This move, while extreme, sends a message to China’s AI talent that they can’t just up and leave. Perhaps China has decided the inevitable chilling effect this will have on investment is worth it.

Ineffable moves

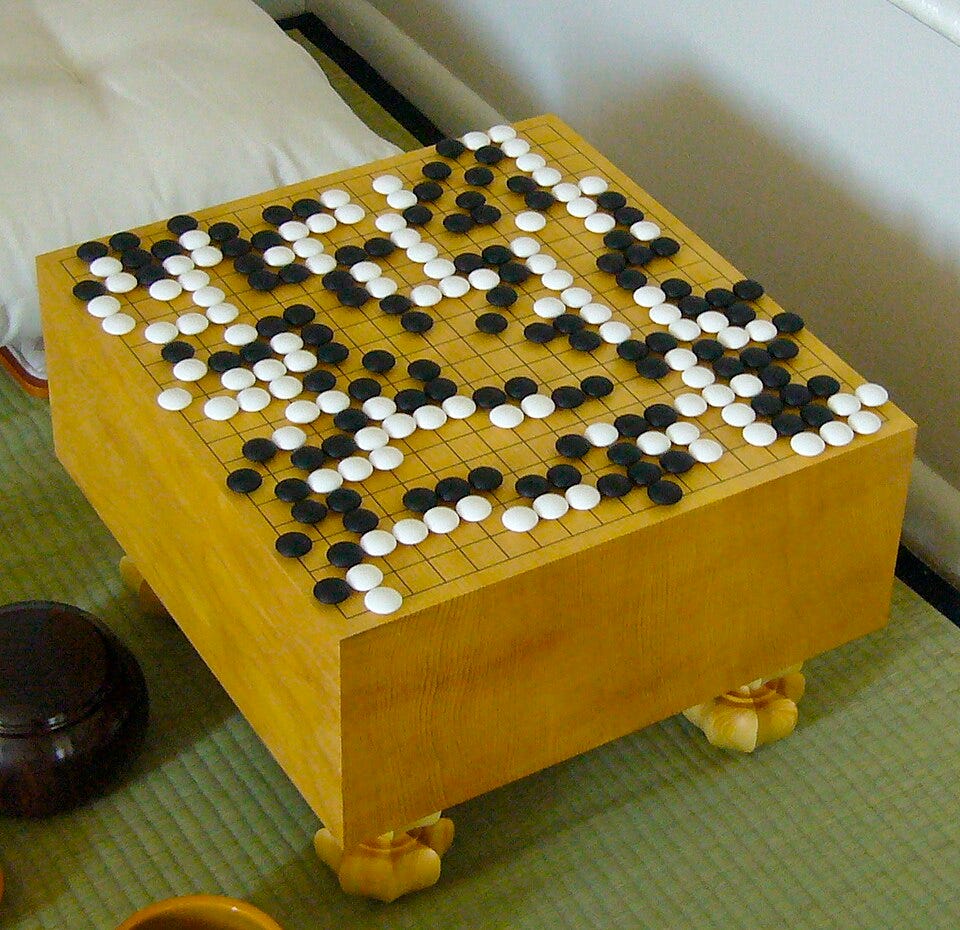

Will Knight of Wired reports (4/27) on the latest billion-dollar startup aimed at “making first contact with superintelligence”. It’s being launched by former Google DeepMind developer David Silver. A talented researcher, Silver is best known as the creator of AlphaGo, a game-playing AI that defeated Go champion Lee Sedol in a legendary showdown. Sedol was blown away:

I thought AlphaGo was based on probability calculation and that it was merely a machine. But when I saw this move, I changed my mind. Surely, AlphaGo is creative.

Silver believes the current paradigm of large language models depends too heavily on human data. His critique may have merit; a later version of AlphaGo, AlphaGo Zero, managed to surpass prior AIs and human experts alike by playing only against itself, without ever seeing a single human game. Silver aims to build an artificial superintelligence (or ASI) the AlphaGo Zero way: teaching it to learn in a simulated environment.

Does Silver understand the magnitude of the task he is undertaking? He claims to; he has called it a “huge responsibility” and “something that has to be done for the benefit of humanity”, and has promised to donate all the money he makes from equity to charity.

Now, I’ve nothing against donating to charity; some of my best friends donate to charity. Silver might well be the friendly, brilliant, and thoughtful researcher Knight makes him out to be.

But if I may briefly ascend my weathered soapbox: There is a kind of person who names his startup “Ineffable Intelligence”, as though ineffability is a property we urgently want in our critical engineering projects; who says the main thing wrong with AI labs’ current reckless rush to build superhuman alien minds is that they rely too much on human data; and who, when pondering the immense responsibility of creating ASI himself, asks first and foremost “What will I do with all the money I’m going to make?”

Whatever else his virtues may be, that kind of person has no business making an ASI. He has no public plan to navigate this incredibly difficult challenge safely.

With two orders of magnitude less working capital than the likes of OpenAI and Anthropic, Ineffable Intelligence is probably not going to be the first to build an ASI. Unfortunately, it is only the latest in a long string of ill-advised projects, and this is going to keep happening until governments make it stop.

Dispatches from Beck

The battle for OpenAI

Elon Musk’s lawsuit against OpenAI goes to court today, NYT’s DealBook reports. The suit claims that OpenAI’s conversion from a nonprofit to a for-profit business was illegal, and Musk is seeking three remedies: that the business be forced to return to nonprofit status, that $150 billion in damages be awarded (previously to Musk, now amended to go to the nonprofit), and that Sam Altman and Greg Brockman be removed from OpenAI’s board.

Musk helped Altman finance and found OpenAI in 2015 to prevent Google from being the main player in AI, in part because of personal conflicts between Musk and then-Google CEO Larry Page. Page reportedly accused Musk of speciesism, a bias towards one’s own species over other moral patients, and this seems to have been the point at which their relationship turned unfriendly. But Musk and Altman have also been in conflict since at least 2018, when disputes over vision (including Musk asserting that OpenAI ought to be part of Tesla) led them to part ways. Since then, Musk has started his own AI firm (xAI), but it has struggled to find success.

The lawsuit faces challenges, including questions of standing, but could substantially alter the landscape of AI development, and potentially of philanthropy. One doesn’t have to love Musk to hope that OpenAI faces some consequences for what one writer calls “Potentially the Largest Theft in Human History”. The company has received funding at an $852 billion valuation, and the nonprofit has been allocated shares worth $130 billion (since rising to ~$180-$220 billion). That’s a gap of more than $600 billion between the company’s valuation and the nonprofit’s stake. That said, even if Altman and Brockman are removed from the board, their replacements would likely be cut from similar cloth and take similar risks, a worrisome consideration when the world is in the balance.

John Oliver doesn’t love chatbots

Last Week Tonight’s main story this week was on AI chatbots. The show chronicles existing harms, downstream of profit incentives and reckless deployment, including AI sycophancy; AI mania and psychosis, which in rare but tragic cases has included encouraging suicide; and the sexualization of children. These harms are real and important, and many companies act recklessly in pursuit of short-term profits. But I’m worried that John is missing the forest for the ferns.

He frames these models as “just next token predictors”, and this is perhaps the central misunderstanding that drives John to frame the story as merely one of corporate malfeasance. In this worldview, LLM tragedies are just evidence of the “move fast and break things” culture of “friendless” tech CEOs. I’m worried about those CEOs too. But I also view these currently misaligned models as evidence that models trained by gradient descent will pick up unpredictable motivations that become very dangerous as capabilities improve.

Humanity’s understanding of neural nets is still developing, but I’d argue that next token prediction must include “real” cognition. Sure, “2 + 2 = 4” is probably just memorized, but when an LLM sees “376 * 631 =...”, the user is asking the neural net to “do math.” The prompt calls for meaningful manipulation of numbers by rules, and to predict the answer the AI has to do all the same manipulations as “real math.” Or consider that predicting what follows the text “blockades of Taiwan have led to…” is a task that inherently asks the respondent to model economics, warfare and diplomacy. Consider this just a teaser and expect ongoing coverage of model reasoning.

The analyses and opinions expressed on AI StopWatch reflect the views of the individual analysts and the sources they cover, and should not be taken as official positions of the Machine Intelligence Research Institute.