Dispatches from Mitch

Three charts to make you clench

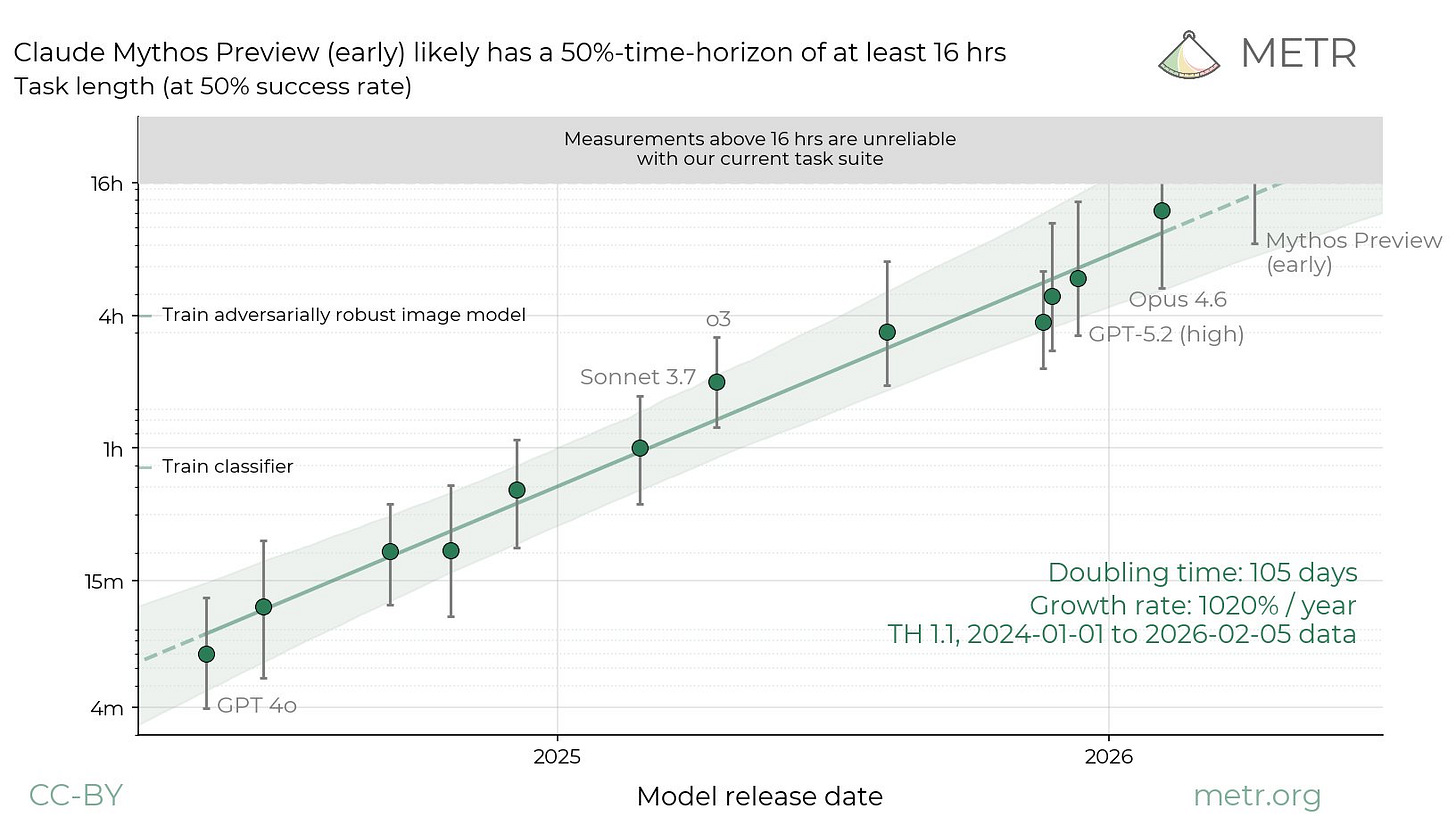

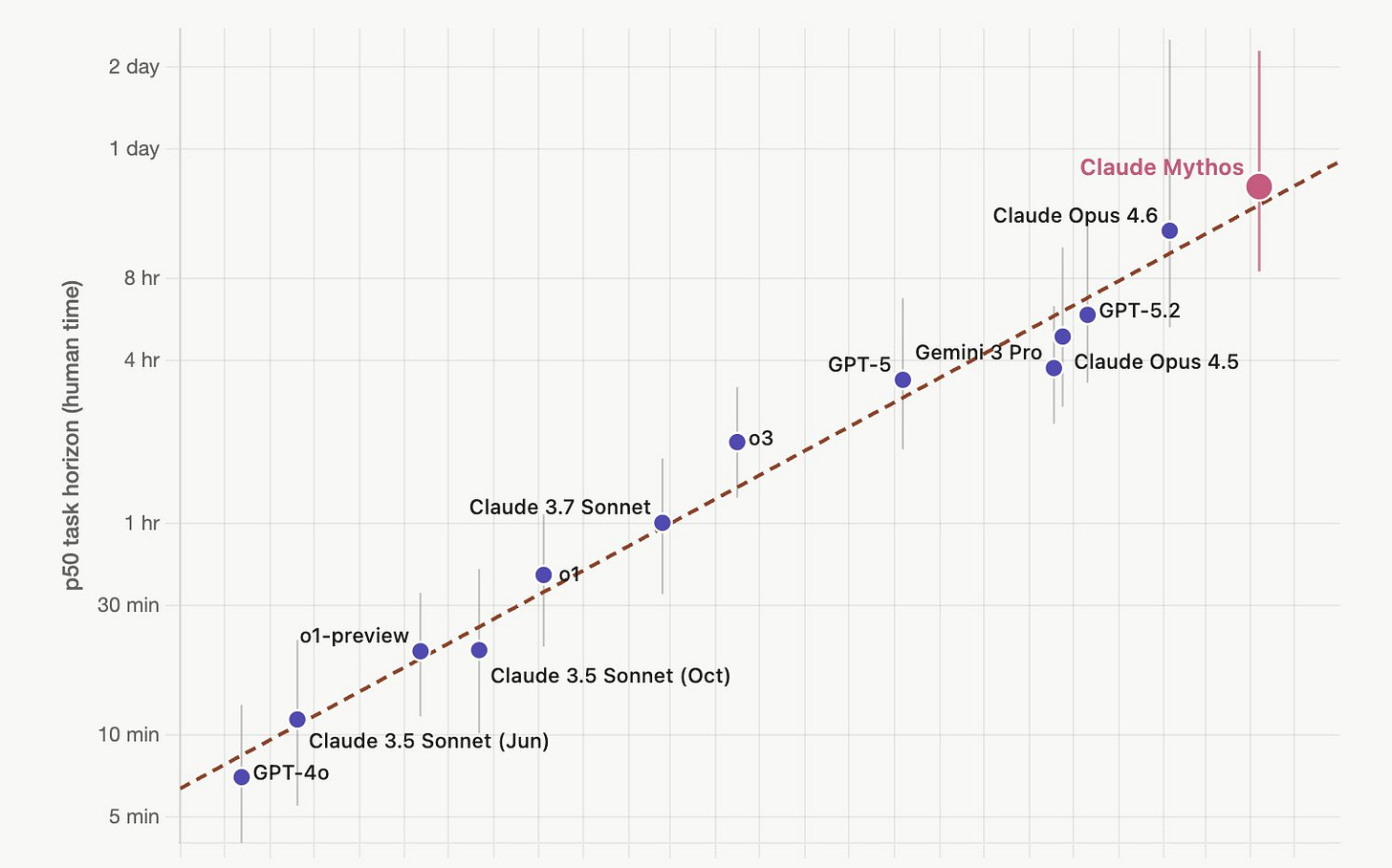

We last mentioned METR’s Time Horizon chart just four days ago. It comes up a lot in tech circles as the most widely recognized indicator of AI’s ability to write code. Writing code matters not just because it’s a monetizable skill, but because it could be an early indicator of AI’s ability to do AI development. This could kick off a self-improvement loop that makes everything go even faster.

Well, METR just put out a snapshot of what that chart looks like with a Preview version of Anthropic’s new and not-fully-released Mythos model included, and the metric is basically broken. On at least half of attempts, Mythos can complete coding tasks that would take humans more than 16 hours. METR says their testing suite struggles to make meaningful estimates at this level.

If this doesn’t have you puckering, it might be because you didn’t know that a year ago, state-of-the-art models were at roughly the 2-hour level.

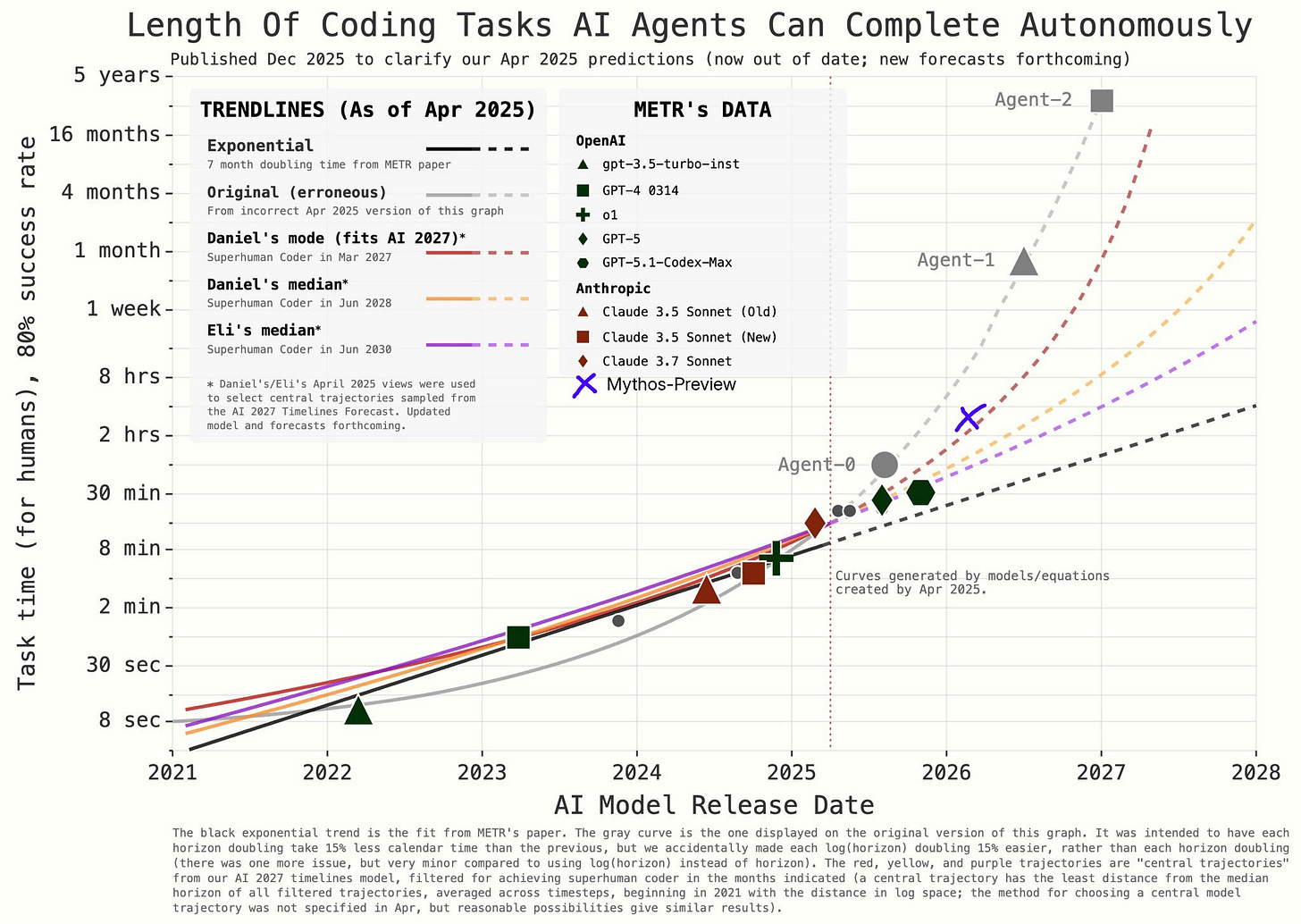

As Peter Wildeford, Head of Policy at AI Policy Network, points out, this is “actually on trend” and not some outlier. But on trend is “still terrifying” because the trend is exponential and implies AI systems doing weeks-long tasks before the year is out.
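For the numerically inclined, here is a back-of-the-envelope sketch of what that exponential implies. It is not METR's methodology. It takes the two rough figures above (about 2 hours a year ago, about 16 hours now) at face value, even though METR says its estimates at the top end are shaky and the 16-hour figure is a "more than." The 12-month gap, the 40-hour work week, and the function names are all my own assumptions for illustration.

```python
import math


def doubling_time_months(start_hours, end_hours, elapsed_months):
    """Months per doubling, assuming steady exponential growth."""
    doublings = math.log2(end_hours / start_hours)
    return elapsed_months / doublings


def months_until(target_hours, current_hours, doubling_months):
    """Months until the time horizon reaches target_hours on the same trend."""
    return doubling_months * math.log2(target_hours / current_hours)


# Rough figures from the text: ~2 hours a year ago, ~16 hours now.
doubling = doubling_time_months(start_hours=2, end_hours=16, elapsed_months=12)

# Assumption: one "work week" of task time is 40 human hours.
one_week = months_until(40, 16, doubling)
two_weeks = months_until(80, 16, doubling)

print(f"Implied doubling time: {doubling:.1f} months")
print(f"One work week (40 h) in about {one_week:.1f} months")
print(f"Two work weeks (80 h) in about {two_weeks:.1f} months")
```

Going from 2 hours to 16 hours is three doublings, so a year of progress works out to one doubling roughly every four months. Holding that pace, a single work week of task time would be about five months out and two work weeks about nine. Whether that lands "before the year is out" depends on when you start the clock and on whether the trend holds at all.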

A developer went viral on X (Twitter) for posting the following image where he plotted Mythos’s score on the forecast chart for the AI 2027 scenario and found it slightly above trend. He found AI spending numbers also exceeding that forecast trend.

Anything close to on-trend with AI 2027 is kind of scary. The scenario is so named because it anticipates AI becoming fairly transformative as early as 2027.

Before you fly

The FAA and everyone associated with airline safety software really want you to know that they’re not using AI to do air traffic control.

That’s my takeaway from reporting by Politico about the FAA’s new SMART initiative. And, you know, fair. I can absolutely picture the media writing the kinds of sensationalist headlines they’re probably worried about.

SMART is a new AI project intended to reduce delays and minimize congestion in the air by more cleverly orchestrating things on the ground. Sometimes small changes in takeoff schedules can lead to big differences in peak traffic complexity. Done well, this would save fuel and ease the loads of overburdened air traffic controllers.

Firms are competing to lead the project, with Thales, Air Space Intelligence, and Palantir invited to participate. Any serious rollout is months away at the earliest, and there’s no budget line for it yet.

Only partially obsolete?

Reporting today by CNN’s Lisa Eadicicco breaks down the statistics to argue that AI is mostly automating only parts of people’s jobs, which gives workers some near-term measure of security.

Executive outplacement firm Challenger, Gray & Christmas says AI has been cited in more than 49,000 job cuts so far this year, making it the top-cited reason for the second consecutive month. But it’s not clear how often this is actually the reason, nor the extent to which a position has been automated versus preemptively eliminated in anticipation of being automatable.

A McKinsey partner says that AI could technically automate 57% of work right now, but this work is spread across “pieces and parts” of roles within a company.

I think there’s some truth in that, and I suspect that investors do, too. Companies are eager to declare themselves “AI native” or “AI first” or similar because it implies they are rearranging those roles so that the human parts are concentrated among a smaller number of humans.

The way to beat a smarter adversary

Looking for a longer listen to flesh out a slow Sunday for AI news?

Let me recommend this 95-minute interview our own Nate Soares had on Alex O’Connor’s YouTube channel a couple of weeks ago.

Soares, of course, is MIRI’s president and co-author of the New York Times Bestseller If Anyone Builds It, Everyone Dies.

O’Connor is trying hard to understand what would drive superintelligent AI to kill us, where those drives would come from, and why current methods don’t let you pick different drives instead. These are great questions that are hard to address in shorter formats.

In this interview, you can hear Soares patiently coming at O’Connor’s confusions from a bunch of different directions, working out new analogies on the fly. Tune in to hear why we can’t expect Tom Cruise to be able to punch the mainframe when AI goes rogue, and what the “soldiers of the Ant Queen” would say if they saw us falling in love, laughing at jokes, and doing other obvious-to-us things that give our lives meaning.

If only we could sit down with everyone like this!

Hang on until the last 20 minutes for a down-to-earth policy chat. I like what Soares has to say about the bright red lines people are waiting for:

[1:25:24] You know, people used to say that the red lines were things like the AI trying to deceive the humans.

And then, you know, that red line came and went, you know.

Demis Hassabis of Google was like, “Oh yeah, deception is my red line.”

At that point, we’ve sort of really got to pull back.

And now, you know, we’ve, we’ve seen AI chains of thought where they’re like, “Oh, I’m being observed. How am I going to sort of like get this, this answer past the humans.”

Part of why that doesn’t stop things is that the first cases where it happens are sort of the most ambiguous cases — the cases where it’s least clear whether this AI is roleplaying versus being deceptive for reasons of having a goal that it can tell is in conflict with the humans. And the first times it’s happening, it’s sort of like, most it’s like pretty likely that it’s doing something a bit more like roleplaying. But part of the issue here is that what we imagine are red lines in fiction are sort of like crossed the first time as these murky brown lines.

And then we take like another step into the murky brownness.

So we take another step into the murky brownness and it gets redder and redder as we go along, but there’s actually not like a bright, clear red line anywhere.

The analyses and opinions expressed on AI StopWatch reflect the views of the individual contributors and the sources they cover, and should not be taken as official positions of the Machine Intelligence Research Institute.